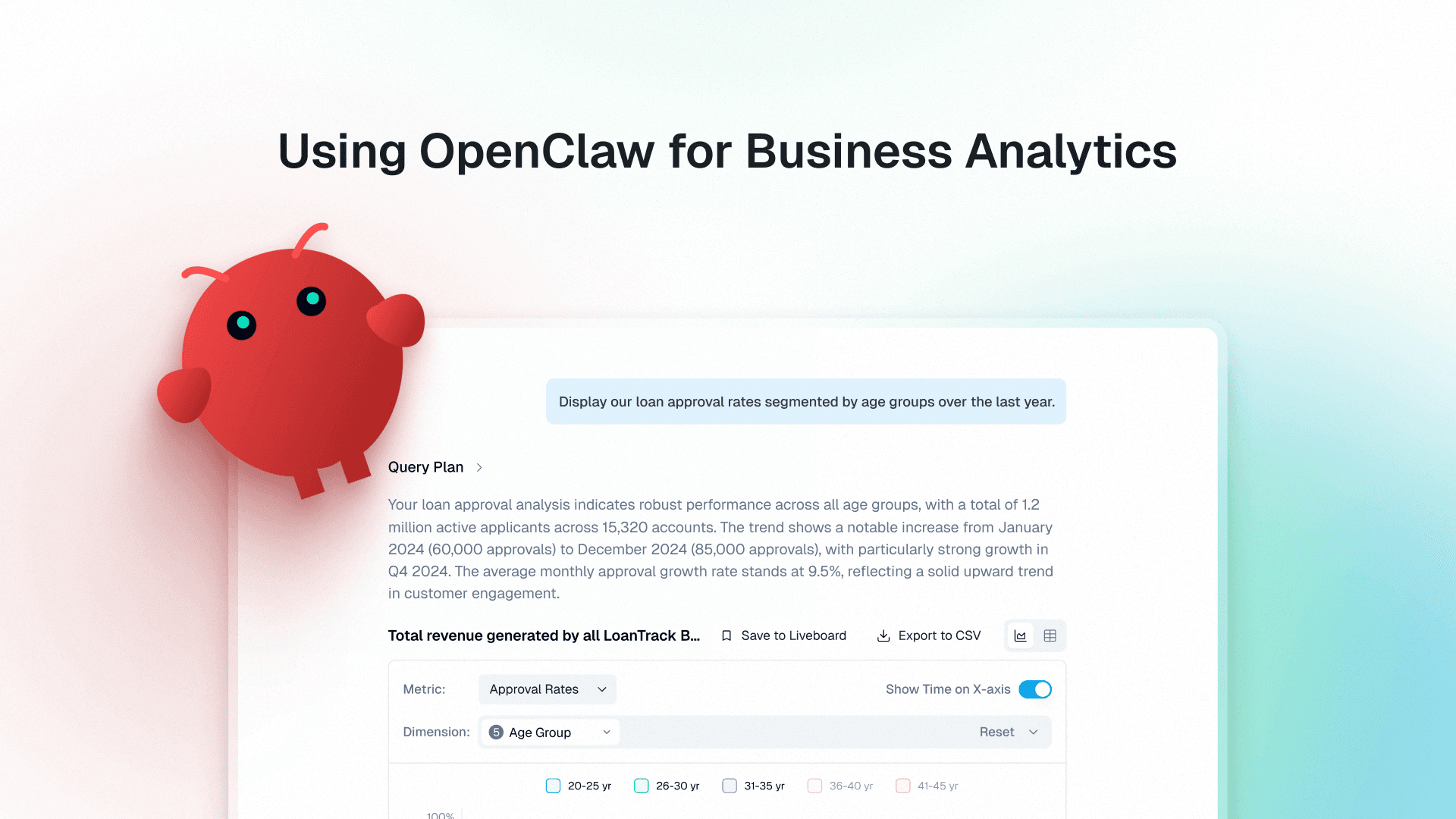

Every enterprise today wants the same capability: to talk to its data.

Executives want answers without dashboards. Operators want insights without writing SQL. Analysts want faster exploration across systems. Conversational AI and text-to-SQL appeared to finally deliver on this vision: anyone could ask questions in natural language and receive actionable insights instantly.

Many organizations initially attempted to build this capability using MCP-based architectures layered on top of enterprise databases.

Across multiple enterprise deployments, including several Fortune 500 organizations we worked with, these approaches consistently struggled with reliability, latency, cost, and correctness. In one large Fortune 500 deployment, the system failed on nearly 93% of queries. In another case, a major pharmaceutical company discontinued its pilot after similar results.

This article examines why MCP-based architectures struggle for conversational analytics, and what architectural patterns work better.

How Are MCPs Used Today for Conversational Analytics?

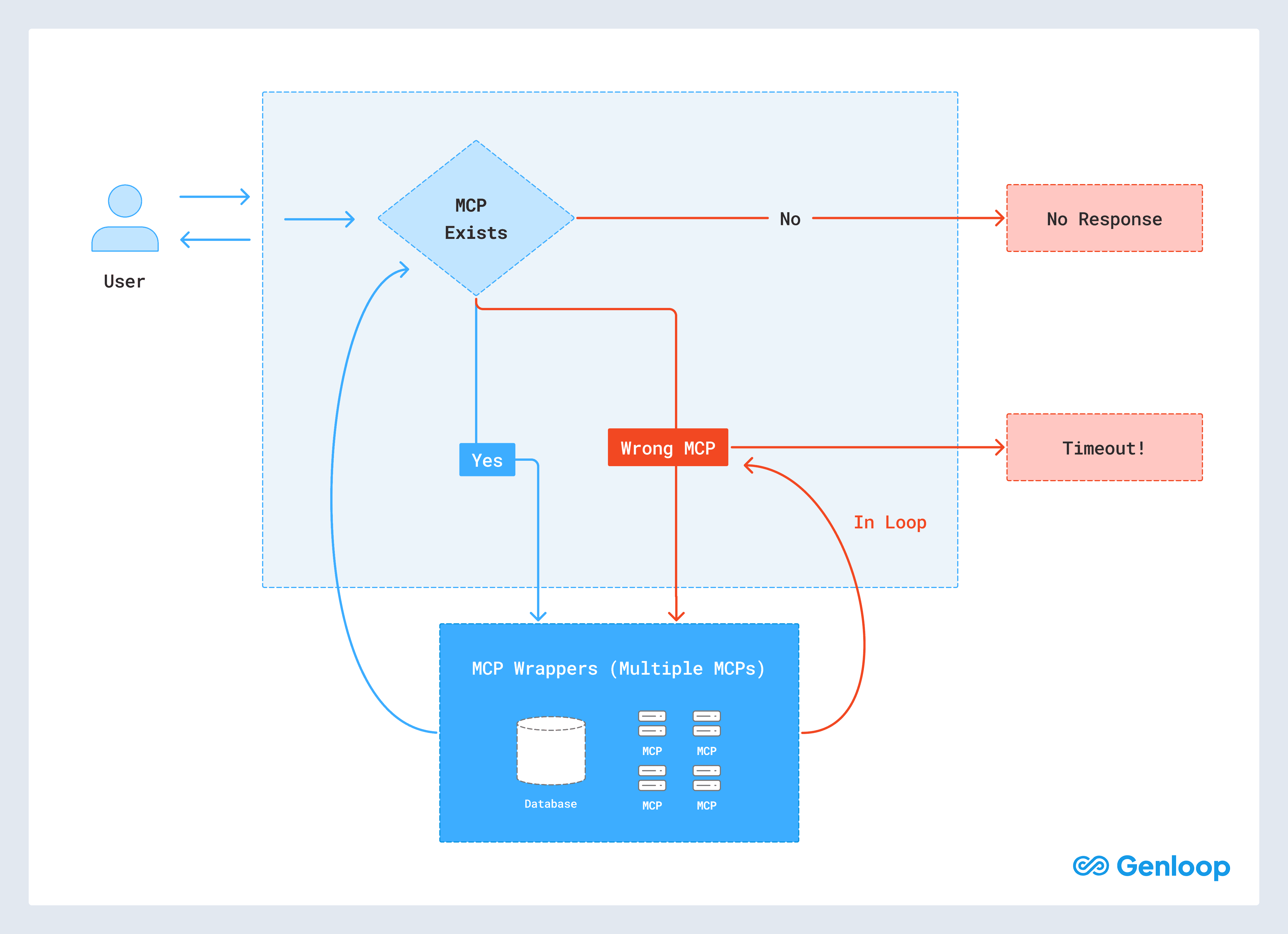

Most enterprise MCP implementations follow a similar architecture.

1. A user asks a question in natural language.
2. The request is routed to an AI agent.
3. The agent evaluates available MCP servers, each representing access to a dataset or capability.
4. The agent selects an MCP server to call.
5. The MCP server returns a data payload.
6. The language model interprets the returned data.
7. The model decides the next action.
8. The process repeats until an answer is produced.

Agentic System with MCP: User queries flow through an AI agent that repeatedly calls MCP servers. The system receives large intermediate payloads when broad MCPs are used, or fails to answer when no suitable MCP exists.

In practice, MCP servers act as middleware between AI systems and enterprise data systems. Instead of allowing the model to reason directly over databases, the system forces interaction through predefined abstractions.

While this structure appears modular, it introduces several structural limitations.
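The loop described above can be sketched in a few lines. This is a minimal illustration, not the MCP protocol or any SDK: `ToolServer`, `pick_tool`, and `run_agent` are hypothetical names, the keyword match stands in for the language model's tool selection, and the list of sub-questions stands in for the model's step-by-step decisions.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ToolServer:
    """Hypothetical stand-in for an MCP server exposing one predefined capability."""
    name: str
    keywords: tuple[str, ...]
    handler: Callable[[str], list[dict]]


def pick_tool(question: str, servers: list[ToolServer]) -> Optional[ToolServer]:
    """Stand-in for the model's tool choice: first server with a matching keyword."""
    q = question.lower()
    for server in servers:
        if any(k in q for k in server.keywords):
            return server
    return None


def run_agent(sub_questions: list[str], servers: list[ToolServer],
              max_steps: int = 5) -> dict:
    """Call one server per sub-question; stop when no server matches."""
    rows_seen = 0
    for step, sub_q in enumerate(sub_questions, start=1):
        if step > max_steps:
            return {"status": "step_limit", "steps": max_steps, "rows_seen": rows_seen}
        server = pick_tool(sub_q, servers)
        if server is None:
            # No predefined capability matches, so the agent cannot proceed.
            return {"status": "no_tool", "steps": step, "rows_seen": rows_seen}
        payload = server.handler(sub_q)
        # In a real agent the whole payload would go back to the model as tokens.
        rows_seen += len(payload)
    return {"status": "answered", "steps": len(sub_questions), "rows_seen": rows_seen}


if __name__ == "__main__":
    servers = [
        ToolServer("invoices", ("invoice",), lambda q: [{"id": i} for i in range(1000)]),
    ]
    print(run_agent(["list invoice totals"], servers))
    print(run_agent(["list invoice totals", "compare contract terms"], servers))
```

In the second call the first step succeeds and returns a large payload, while the second step ends with `no_tool` because no server covers contracts.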

What Are the Issues With MCP for Conversational Analytics?

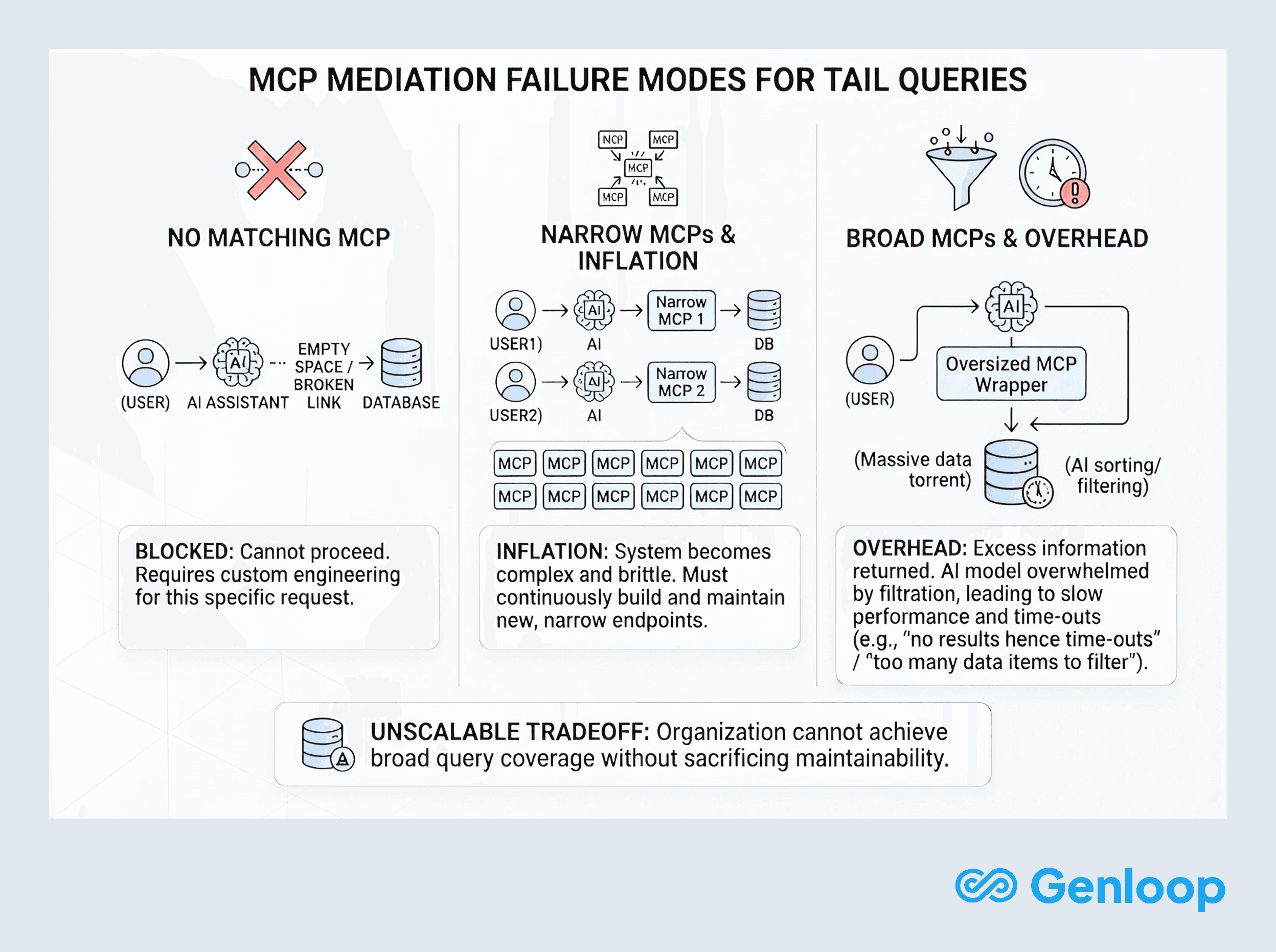

The core limitation of MCP architectures is structural: they prevent AI systems from interacting directly with enterprise data.

When an MCP does not exist for a particular question, the assistant cannot proceed. When an MCP is too broad, it returns large amounts of data that must be filtered by the model. When it is too narrow, engineering teams must constantly build new endpoints.

These constraints undermine the goal of conversational analytics.
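The "too broad" case can be made concrete with a small, hypothetical example. The sketch below uses Python's built-in `sqlite3` module and an invented `invoices` table; `broad_tool` and `direct_query` are illustrative names, not part of any MCP server. It contrasts an endpoint that returns every row for the model to filter with a query that pushes the filter and aggregation into the database.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory table of invented invoices: 50 customers x 12 months."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE invoices (customer TEXT, month INTEGER, amount REAL)")
    rows = [(f"cust_{c}", m, 100.0 * c + m) for c in range(1, 51) for m in range(1, 13)]
    conn.executemany("INSERT INTO invoices VALUES (?, ?, ?)", rows)
    return conn


def broad_tool(conn: sqlite3.Connection) -> list[tuple]:
    """A broad endpoint: returns every invoice and leaves filtering to the model."""
    return conn.execute("SELECT customer, month, amount FROM invoices").fetchall()


def direct_query(conn: sqlite3.Connection, customer: str) -> list[tuple]:
    """Pushes the filter and the aggregation down into the database."""
    return conn.execute(
        "SELECT customer, SUM(amount) FROM invoices "
        "WHERE customer = ? GROUP BY customer",
        (customer,),
    ).fetchall()


if __name__ == "__main__":
    conn = build_demo_db()
    print(len(broad_tool(conn)), "rows returned by the broad endpoint")
    print(direct_query(conn, "cust_7"), "returned by the direct query")
```

Both paths can answer "what did cust_7 pay this year?", but the first hands the model hundreds of rows to sift through, while the second returns a single aggregated row.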

1. Limited Coverage

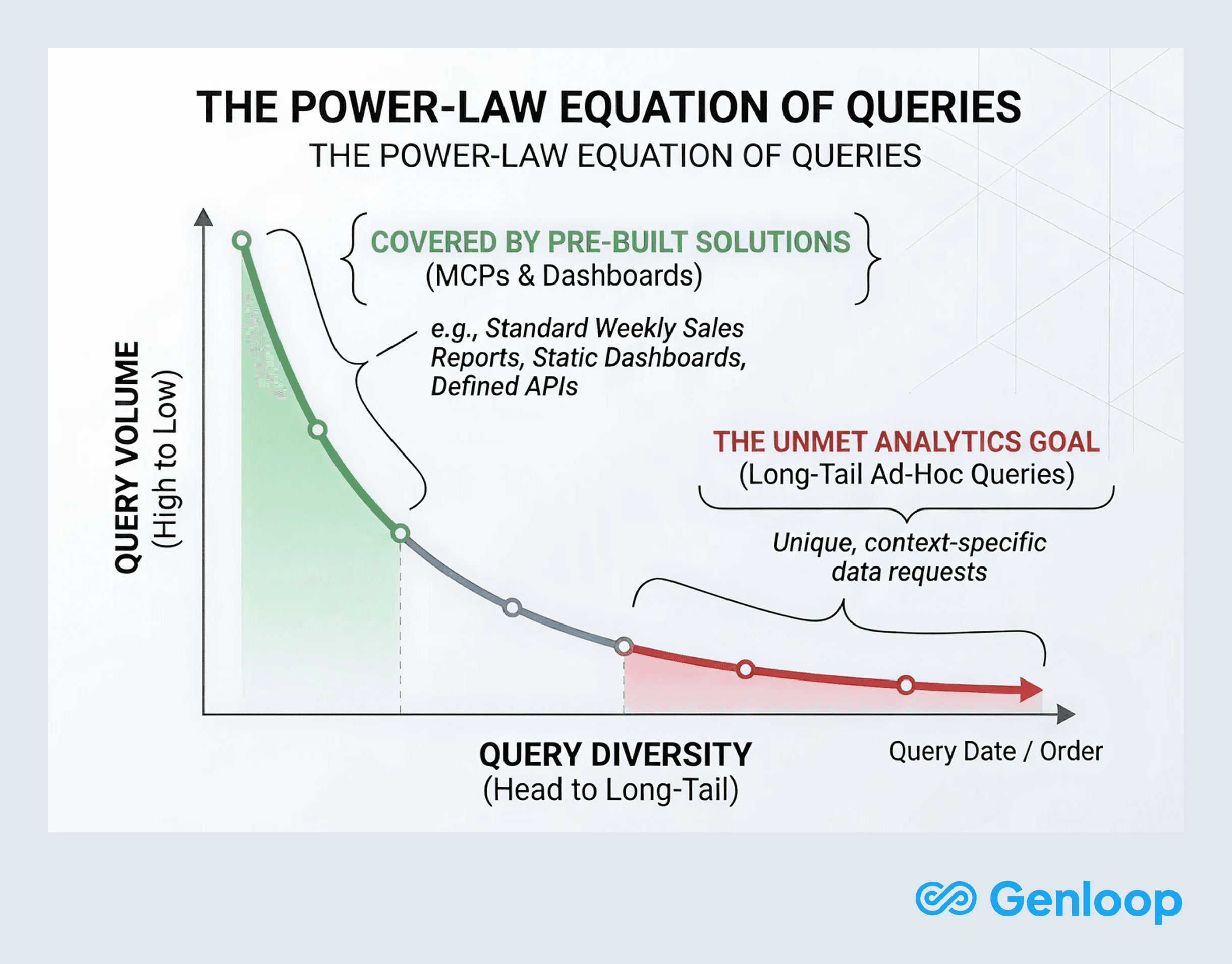

Conversational analytics exists primarily to answer long-tail questions that fall outside predefined reporting patterns.

Creating MCP endpoints for every possible analytical request is not scalable. As a result, many questions simply fall outside the system’s capabilities. In production deployments, MCP-based systems frequently time out, fail to respond, or produce incorrect outputs when confronted with these queries.

The architecture works well for predefined workflows but struggles with open-ended analysis.

2. Lack of Business Context

Enterprise questions rarely involve a single dataset.

Questions such as “Why did this customer’s invoice change last month?” require knowledge of contracts, billing rules, onboarding flows, operational exceptions, and usage patterns. This information often spans structured databases, documentation, and internal operational systems.

Traditional MCP architectures do not encode this business memory. As a result, the agent searches across datasets without sufficient context. This frequently leads to hallucinated explanations, incorrect joins, or execution loops that fail before producing an answer.

3. Latency and Cost

Each MCP call introduces additional reasoning steps, tokens, and network overhead.

Multi-step analytical questions, which represent a large share of enterprise queries, require several MCP interactions. As the chain of calls grows longer, systems become slower and more expensive to operate. Reliability drops sharply as execution chains increase in length.

What begins as a clean abstraction often becomes fragile orchestration.
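One way to see why reliability falls as chains grow: if each step succeeds independently with probability p, an n-step chain succeeds with probability p^n. The sketch below computes this. Both the independence assumption and the 0.9 per-step success rate are illustrative assumptions, not measurements from the deployments described in this article.

```python
def chain_success(p_step: float, n_steps: int) -> float:
    """Probability that all n_steps succeed, assuming independent steps."""
    if not 0.0 <= p_step <= 1.0:
        raise ValueError("p_step must be between 0 and 1")
    if n_steps < 0:
        raise ValueError("n_steps must be non-negative")
    return p_step ** n_steps


if __name__ == "__main__":
    # 0.9 is an assumed per-step success rate, chosen only for illustration.
    for n in (1, 3, 5, 10):
        print(f"{n:>2} steps: {chain_success(0.9, n):.3f}")
```

Under these assumptions, a ten-step chain completes only about 35% of the time even though each individual step succeeds 90% of the time.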

Across deployments, several patterns consistently appear.

| Dimension | MCP-based Data Talking |
|---|---|
| Reliability | Timed out on ~93% of runs; frequent failures and low user trust. |
| Accuracy & depth | Shallow answers, frequent hallucinations, struggled with multi-step questions. |
| Scale & maintenance | High developer effort to design, ship, and maintain MCPs for each new use case. |
| Coverage | Limited to predefined MCP capabilities. |
| Capabilities | Primarily simple point-in-time lookups. |
| Latency | P95 more than 30 seconds |
| Cost | MCP overheads inflate cost |

What Is the Best Approach for Conversational Analytics?

Across deployments, one lesson becomes clear:

AI should not talk to abstractions of data. It should talk to data itself.

A reliable conversational analytics system should behave less like a chatbot calling tools and more like a skilled analyst working directly with enterprise systems.

Instead of routing requests through middleware layers, the system should follow a different pattern:

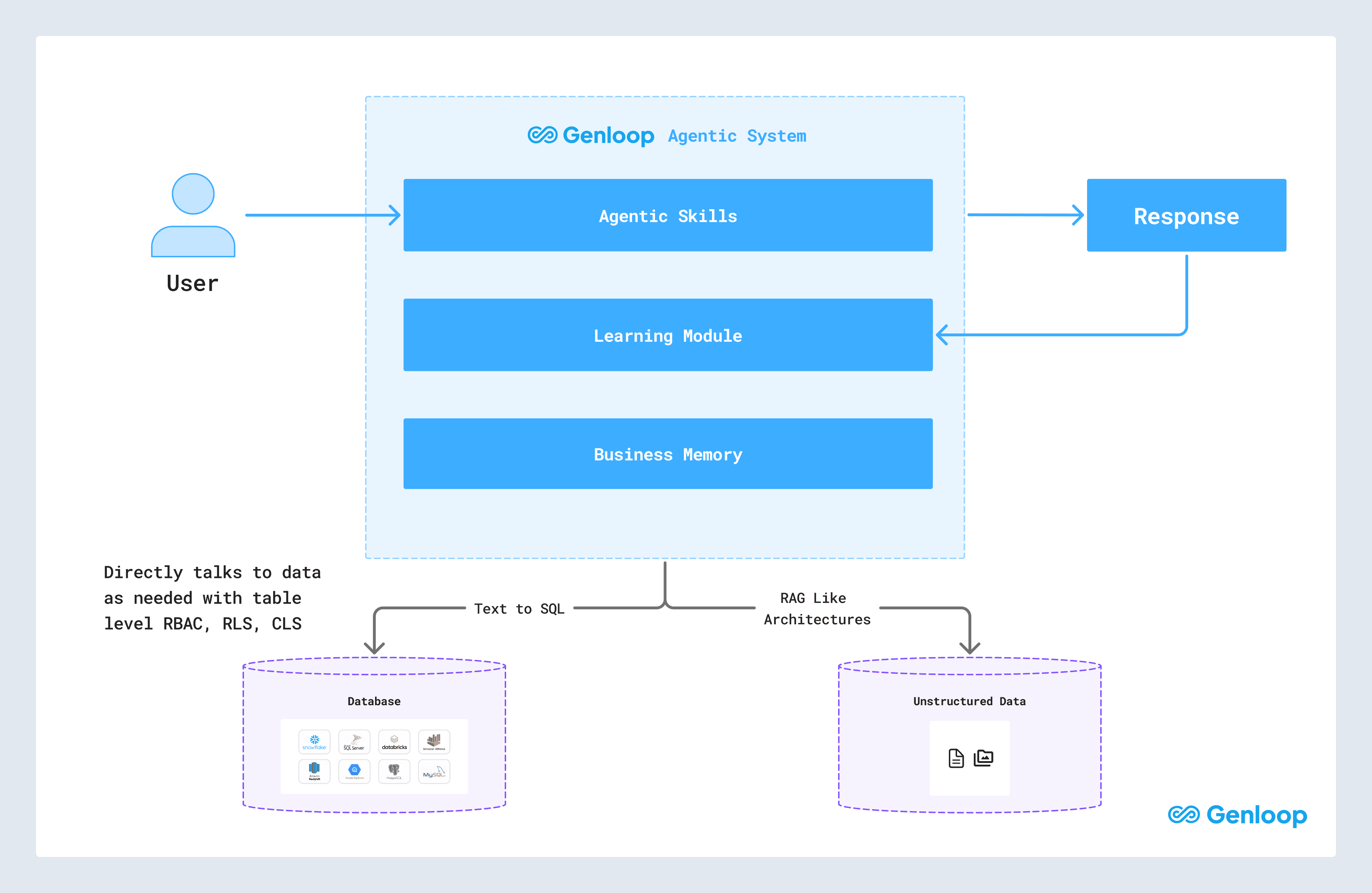

1. Build a unified enterprise memory

The system constructs a structured understanding of the enterprise environment. This includes databases, time-series systems, documents, operational rules, and business processes.

2. Reason over that memory

When a user asks a question, the model reasons over this unified representation to determine what data is required.

3. Execute deterministically

The system then executes directly against live data sources.

- Structured data is accessed through text-to-SQL execution.
- Unstructured sources are retrieved using RAG-style retrieval architectures.

Separating reasoning from execution ensures that analytical workflows remain deterministic and auditable, while still allowing natural language interaction.
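The split between reasoning and execution can be sketched as follows. This is a minimal illustration under stated assumptions, not Genloop's implementation: `MEMORY`, `Plan`, `plan_invoice_change`, and `execute_plan` are hypothetical names, the planning step is hard-coded where a real system would have a model generate the SQL, and the `SELECT`-prefix check is a naive guard rather than a real security control. Only the `sqlite3` and `dataclasses` standard-library modules are used.

```python
import sqlite3
from dataclasses import dataclass


@dataclass
class Plan:
    """Output of the reasoning stage: a query plus the memory entries behind it."""
    sql: str
    params: tuple
    sources: list[str]


# Hypothetical slice of "enterprise memory": a schema note and a business rule.
MEMORY = {
    "invoices": "invoices(customer, month, amount): one row per customer per month",
    "rule:invoice_change": "explain a change by comparing with the previous month",
}


def plan_invoice_change(customer: str, month: int) -> Plan:
    """Stand-in for the model's reasoning step (fixed SQL, for illustration only)."""
    sql = (
        "SELECT month, amount FROM invoices "
        "WHERE customer = ? AND month IN (?, ?) ORDER BY month"
    )
    return Plan(sql, (customer, month - 1, month), ["invoices", "rule:invoice_change"])


def execute_plan(conn: sqlite3.Connection, plan: Plan, audit_log: list[dict]) -> list[tuple]:
    """Deterministic execution: check the statement, run it, record what ran."""
    if not plan.sql.lstrip().upper().startswith("SELECT"):
        raise ValueError("only SELECT statements are executed")
    rows = conn.execute(plan.sql, plan.params).fetchall()
    audit_log.append({"sql": plan.sql, "params": plan.params, "row_count": len(rows)})
    return rows


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE invoices (customer TEXT, month INTEGER, amount REAL)")
    conn.executemany(
        "INSERT INTO invoices VALUES (?, ?, ?)",
        [("acme", 4, 100.0), ("acme", 5, 130.0)],
    )
    log: list[dict] = []
    print(execute_plan(conn, plan_invoice_change("acme", 5), log))
    print(log)
```

Because the plan is an explicit object, the same SQL and parameters always produce the same execution path, and the audit log records exactly what ran against the data source.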

In several enterprise deployments we participated in, including implementations built using Genloop, this architecture proved significantly more reliable than MCP-based approaches. By allowing the system to reason directly over enterprise data structures rather than through middleware abstractions, conversational queries translated more consistently into correct analytical execution.

The proposed approach: Build a unified business memory, reason over that, and directly talk to data through deterministic execution.

How Does Genloop’s Proposed Approach Compare to the MCP Approach?

Across deployments, several patterns consistently appeared.

| Dimension | MCP-based Talking-to-data | Direct Talking-to-data |
|---|---|---|
| Reliability | Timed out on ~93% of runs; high failure rates | Stable execution with near-zero timeouts |
| Accuracy & depth | Shallow answers; hallucinations in complex queries | High-fidelity answers grounded in live enterprise data. 95% accuracy. |
| Scale & maintenance | High engineering overhead to maintain endpoints | Extends naturally as new data sources are added |
| Coverage | Limited to predefined MCP tools | Covers the full enterprise data environment |
| Capabilities | Simple lookups | Complex analytical workflows and multi-step reasoning |
| Latency | P95 more than 30 seconds | P95 of 10 seconds |
| Cost | MCP overheads inflate cost | 30% reduction in tokens per request |

Summary

MCPs were introduced to make AI integration modular and safe. They are good for integrating with software services, but are a dead end for conversational analytics at enterprise scale.

They introduce middleware where understanding is required, abstraction where precision is needed, and orchestration where direct reasoning would suffice.

Talking to data is fundamentally different from calling tools. Enterprise intelligence demands systems that understand business context, execute deterministically, and learn continuously from real interactions.

The future of text-to-SQL and conversational analytics will not be defined by more wrappers or smarter prompts. If enterprises truly want AI that understands their business, the path forward is clear:

Stop wrapping data. Start talking to it.

FAQs

Why are MCPs unsuitable for enterprise analytics?

MCPs restrict data access through predefined tools, forcing AI systems to interpret large intermediate outputs rather than reasoning directly over databases. This results in latency, higher costs, and unreliable execution for complex analytical queries.

Are MCPs completely useless?

No. MCPs work well for controlled workflows and predefined actions. However, they struggle with exploratory analytics and long-tail enterprise questions that require flexible reasoning across multiple datasets.

What does “talking directly to data” mean?

It means allowing AI systems to query underlying databases and knowledge sources directly, while respecting enterprise security controls, instead of routing access through middleware wrappers. For structured sources such as SQL databases, this means querying with text-to-SQL. For unstructured sources, it means retrieval architectures like RAG, extended with multi-modal understanding and an enterprise knowledge graph.

Why do enterprise questions fail more often than demos?

Real users ask unpredictable, multi-step questions that involve business logic and cross-system dependencies. Most enterprise implementations rely on MCP-based systems for data access. These systems are limited by MCP coverage and fail when users need open-ended exploration.

How did Genloop improve success rates?

By eliminating MCP middleware, building unified enterprise memory, and enabling direct execution against live data systems, Genloop converted exploratory reasoning into deterministic analytical execution. Built on top of its strong foundation of memory, retrieval, execution, and learning, this approach achieved a 95%+ success rate.

Is this the future of text-to-SQL?

Yes. The next generation of text-to-SQL systems will focus on data-native architectures that combine reasoning, execution, and business memory, rather than relying on tool orchestration layers. This is why Genloop ranks number one on text-to-SQL industry benchmarks like Spider2 with a score of 96.7%.