OpenClaw (formerly known as ClawdBot or Moltbot) is a viral, open-source AI agent framework created by software engineer Peter Steinberger. It has gained a large following among developers and data teams because, unlike standard SaaS chatbots, it is an always-on agent whose code is open source and which you host yourself. Acting as an orchestration layer between powerful AI models and your personal data, it lets users execute complex, autonomous tasks and manage their digital lives directly through everyday messaging apps like WhatsApp or Telegram.

In this guide, we'll walk through what OpenClaw can do for business analytics, how to set it up over common data warehouses, and where it genuinely shines. We'll also be honest about where it falls short — particularly if you're trying to roll it out beyond a single developer or data analyst.

What Can You Do With OpenClaw for Business Analytics?

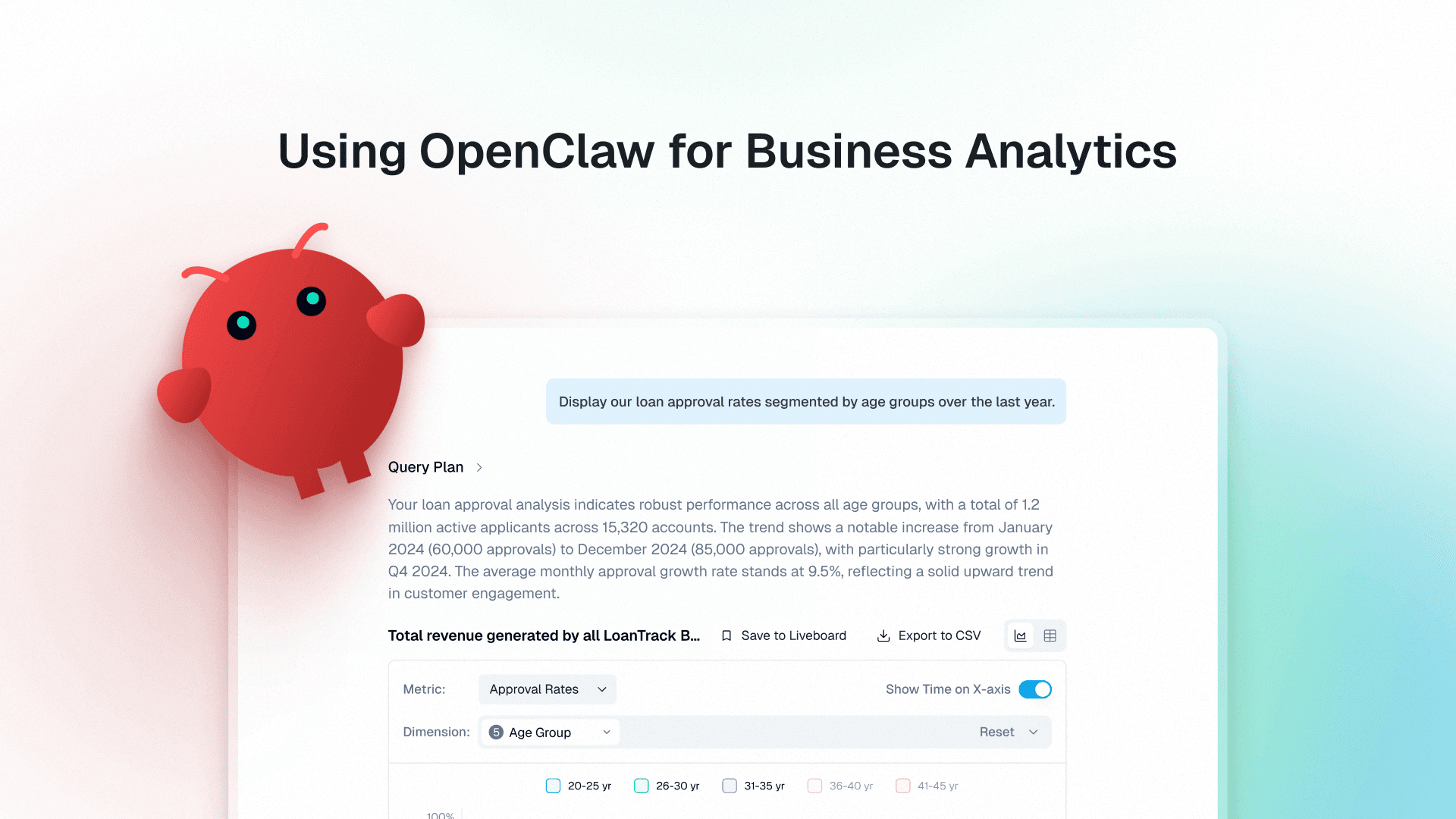

Once OpenClaw is connected to your databases, developers can query data in natural language. Instead of writing SQL or asking a data analyst to pull a report, a developer asks OpenClaw a business question. OpenClaw translates the question into SQL, runs the query against the connected database, and returns the results along with a narrative explanation.
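The question → SQL → results → narrative loop can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw's code. `translate_to_sql` is a hypothetical stand-in for the model call, implemented here as a fixed lookup so the sketch runs offline. The `orders` table is also invented for the example.

```python
import sqlite3

# Hypothetical stand-in for the model: OpenClaw would ask an LLM to write the SQL.
# A fixed lookup keeps this sketch deterministic and runnable offline.
CANNED_SQL = {
    "how many orders did we get per region?":
        "SELECT region, COUNT(*) AS n_orders FROM orders "
        "GROUP BY region ORDER BY n_orders DESC",
}


def translate_to_sql(question: str) -> str:
    """Step 1 (hypothetical): turn a business question into SQL."""
    key = question.strip().lower()
    if key not in CANNED_SQL:
        raise ValueError(f"no SQL available for: {question!r}")
    return CANNED_SQL[key]


def answer(conn: sqlite3.Connection, question: str) -> str:
    sql = translate_to_sql(question)     # 1. question -> SQL
    rows = conn.execute(sql).fetchall()  # 2. run it against the database
    # 3. wrap the rows in a one-sentence narrative
    top_region, top_count = rows[0]
    total = sum(count for _, count in rows)
    return (f"{top_region} leads with {top_count} of {total} orders "
            f"across {len(rows)} regions.")


def demo_db() -> sqlite3.Connection:
    """Tiny in-memory table standing in for a real warehouse."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT)")
    conn.executemany(
        "INSERT INTO orders (region) VALUES (?)",
        [("EMEA",), ("EMEA",), ("EMEA",), ("APAC",), ("NA",), ("NA",)],
    )
    return conn


if __name__ == "__main__":
    print(answer(demo_db(), "How many orders did we get per region?"))
    # → EMEA leads with 3 of 6 orders across 3 regions.
```

In the real system, step 1 is the step that can go wrong. The SQL may be valid yet still answer a different question than the one you meant.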

Here are the business functions that stand to benefit.

1. Financial Analysis

Finance teams can use OpenClaw to speed up variance analysis, budget vs. actuals, and ad-hoc P&L breakdowns:

"Compare operating expenses this quarter to the same quarter last year."

"Which cost centers exceeded budget by more than 10%?"

"What is the revenue impact of the top 5 customer churns this month?"

2. Product Analytics

Product managers can use OpenClaw to answer questions about feature adoption, funnel conversion, and retention without waiting for engineering:

"What percentage of users activated the new onboarding flow in the last 30 days?"

"How does retention differ between users who completed setup versus those who skipped it?"

"Which features are used most by our top 20% of accounts?"

3. Sales Analysis

Sales leaders and RevOps teams use it for pipeline analysis, rep performance, and forecasting questions:

"Which deals have been in the 'proposal' stage for more than 30 days?"

"What is the average sales cycle by industry segment?"

"How much of Q2 quota is covered by current pipeline?"

4. Marketing Analysis

Marketing teams can use OpenClaw for performance reporting, channel attribution exploration, and rapid segmentation — especially when the data is already clean and modeled in a warehouse:

"Which acquisition channels drove the highest LTV cohorts in the last 90 days?"

"What was the CAC payback period by channel and campaign for Q1?"

"Show me week-over-week conversion rate changes for the pricing page, split by traffic source."

5. Customer Success / Support Analytics

CS and support leaders can use OpenClaw to connect product usage, support tickets, and renewal outcomes to identify churn risk and prioritize interventions:

"Which accounts had a spike in high-severity tickets in the last 14 days?"

"What product actions correlate most with renewal for customers over $50k ARR?"

"List accounts with declining weekly active usage and increasing support volume."

How to Set Up OpenClaw Over Your Data Warehouse

To get the most out of OpenClaw as an autonomous data analyst, you need to deploy it properly. Because OpenClaw is an always-on agentic system with powerful local execution capabilities, do not install it directly on your personal laptop. For security, sandboxing, and 24/7 uptime, host it on a dedicated cloud VPS (like AWS, DigitalOcean, or Hostinger) or an isolated machine like a Mac Mini.

1. Basic Deployment & Setup

The most secure and common deployment method is Docker. It isolates the agent from the host, so the agent can only reach the files and directories you explicitly mount.

Official Repository: openclaw/openclaw

Step 1: Run the Docker Container

Pull the image and run the container. Mount your ~/.openclaw directory as a volume so OpenClaw's memory persists across restarts.

OpenClaw's web UI will be available at http://localhost:18789.
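The exact `docker run` command did not survive in this guide, so here is a hedged sketch of the flags it needs. The helper builds, but does not execute, a command that runs the container detached, publishes the web UI on port 18789, and mounts the memory directory. The image name and the in-container path are hypothetical placeholders; take the real values from the openclaw/openclaw repository. `docker_run_args` is an illustrative helper, not part of OpenClaw.

```python
import shlex
from pathlib import Path

UI_PORT = 18789  # OpenClaw web UI port, per this guide


def docker_run_args(image: str, host_dir: Path, container_dir: str,
                    name: str = "openclaw") -> list[str]:
    """Build a `docker run` argv that keeps the container running, publishes the
    web UI on the host's loopback interface, and mounts the memory directory."""
    return [
        "docker", "run", "-d",
        "--name", name,
        "--restart", "unless-stopped",           # restart after crashes or reboots
        "-p", f"127.0.0.1:{UI_PORT}:{UI_PORT}",   # UI reachable only from the host
        "-v", f"{host_dir}:{container_dir}",      # ~/.openclaw survives restarts
        image,
    ]


if __name__ == "__main__":
    args = docker_run_args(
        image="OPENCLAW_IMAGE",           # hypothetical placeholder: use the repo's image
        host_dir=Path.home() / ".openclaw",
        container_dir="/data/.openclaw",  # hypothetical placeholder: check the repo's docs
    )
    print(shlex.join(args))  # print the command for review instead of running it
```

Binding the port to 127.0.0.1 is a deliberate choice. On a VPS you then reach the UI through an SSH tunnel (for example `ssh -L 18789:localhost:18789 user@your-vps`) instead of exposing it to the internet.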

Step 2: Configure Workspace & Memory

Unlike traditional BI tools, OpenClaw's architecture relies on plain text Markdown files for its memory system, stored in your mounted ~/.openclaw directory.

SOUL.md: Defines the agent's identity and boundaries. Explicitly instruct the agent here to act as a read-only data analyst.

MEMORY.md: Where OpenClaw automatically logs its context, schema discoveries, and long-term memory.

memory/YYYY-MM-DD.md: Daily activity logs written automatically by the agent.
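To make the layout above concrete, here is a small sketch that seeds a SOUL.md with read-only analyst rules and computes where a given day's log would live. The SOUL.md wording is illustrative, not an official OpenClaw template. The base directory is a parameter in case your installation keeps these files in a subfolder of ~/.openclaw. `write_soul` and `daily_log_path` are hypothetical helpers.

```python
from datetime import date
from pathlib import Path

# Illustrative SOUL.md wording (an assumption, not an OpenClaw template):
# adapt the rules to your own warehouse and policies.
SOUL_TEMPLATE = """# Identity
You are a read-only data analyst for the analytics team.

# Boundaries
- Only run SELECT queries; never run INSERT, UPDATE, DELETE, DROP, or ALTER.
- Show the SQL you ran alongside every answer.
- If a metric is ambiguous (for example, gross vs. net revenue), ask before querying.
"""


def write_soul(base: Path, overwrite: bool = False) -> Path:
    """Create <base>/SOUL.md, keeping any existing file unless overwrite=True."""
    base.mkdir(parents=True, exist_ok=True)
    soul = base / "SOUL.md"
    if overwrite or not soul.exists():
        soul.write_text(SOUL_TEMPLATE, encoding="utf-8")
    return soul


def daily_log_path(base: Path, day: date) -> Path:
    """Where the agent's log for `day` lives, following memory/YYYY-MM-DD.md."""
    return base / "memory" / f"{day:%Y-%m-%d}.md"
```

Calling `write_soul(Path.home() / ".openclaw")` once seeds the file. After that, edit SOUL.md by hand; the helper never overwrites your changes unless asked to.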

2. Connecting Your Data Warehouse

OpenClaw connects to databases via MCP servers (Model Context Protocol) — lightweight processes that expose your data warehouse as tools the agent can call. You configure them in ~/.openclaw/openclaw.json under the mcpServers key.
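Adding a server amounts to inserting one entry under `mcpServers` without disturbing the rest of openclaw.json. The sketch below does exactly that. It makes two assumptions: that the file is plain JSON (if yours uses comments or trailing commas, `json.loads` will reject it), and that each entry uses the `command` / `args` / `env` shape common to MCP client configs. Confirm both against the OpenClaw docs. `add_mcp_server` is a hypothetical helper.

```python
import json
from pathlib import Path


def add_mcp_server(config_path: Path, name: str, entry: dict) -> dict:
    """Add or replace one server under "mcpServers", keeping all other settings.

    Assumes the file is plain JSON; json.loads will reject comments or
    trailing commas if your config uses a more relaxed syntax.
    """
    config: dict = {}
    if config_path.exists():
        config = json.loads(config_path.read_text(encoding="utf-8"))
    config.setdefault("mcpServers", {})[name] = entry
    config_path.parent.mkdir(parents=True, exist_ok=True)
    config_path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config
```

For example, `add_mcp_server(Path.home() / ".openclaw" / "openclaw.json", "warehouse", entry)` registers a server under the name "warehouse". Restart OpenClaw afterwards so it picks up the change.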

Security: Always use database credentials with read-only permissions. Autonomous agents can execute commands unpredictably; preventing write access protects you from accidental data modification.
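The point of read-only credentials is that the database itself refuses writes, whatever the agent decides to run. The sketch demonstrates the principle with SQLite's read-only URI mode (`mode=ro`) as a self-contained stand-in. For PostgreSQL, MySQL, or Snowflake, the equivalent is a dedicated role granted only SELECT. The table and helper names are hypothetical.

```python
import sqlite3
import tempfile
from pathlib import Path


def make_db(path: Path) -> None:
    """Create a small demo table using an ordinary read-write connection."""
    conn = sqlite3.connect(path)
    try:
        conn.execute("CREATE TABLE invoices (id INTEGER PRIMARY KEY, amount REAL)")
        conn.execute("INSERT INTO invoices (amount) VALUES (120.0), (80.0)")
        conn.commit()
    finally:
        conn.close()


def open_read_only(path: Path) -> sqlite3.Connection:
    """Open the same file with SQLite's mode=ro URI flag: reads work, writes fail."""
    return sqlite3.connect(path.resolve().as_uri() + "?mode=ro", uri=True)


def try_write(conn: sqlite3.Connection) -> str:
    """Simulate an agent issuing a destructive statement."""
    try:
        conn.execute("DELETE FROM invoices")
        return "write succeeded"
    except sqlite3.OperationalError as exc:
        return f"write blocked: {exc}"


if __name__ == "__main__":
    db = Path(tempfile.mkdtemp()) / "warehouse.db"
    make_db(db)
    ro = open_read_only(db)
    print(ro.execute("SELECT SUM(amount) FROM invoices").fetchone()[0])
    print(try_write(ro))
    ro.close()
```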

Over PostgreSQL and MySQL (via DBHub)

DBHub is a zero-dependency MCP server that supports PostgreSQL, MySQL, MariaDB, SQLite, and SQL Server with a single npm command.

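Point DBHub at PostgreSQL with a DSN such as postgres://user:password@host:5432/dbname. For MySQL, swap the scheme and port: mysql://user:password@host:3306/dbname. No separate install is needed, because npx downloads and runs the package on demand.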
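Below is a sketch of the DBHub entry for both schemes. It also percent-encodes the credentials, since a password containing `@` or `/` would otherwise break the DSN. The package name and flags (`@bytebase/dbhub`, `--transport stdio`, `--dsn`) are as we understand them from the DBHub README; verify them before use. The user, host, and database names are hypothetical.

```python
import json
from urllib.parse import quote


def build_dsn(scheme: str, user: str, password: str, host: str, port: int,
              dbname: str) -> str:
    """Assemble a DSN, percent-encoding the credentials so characters such as
    '@' or '/' in a password don't break URL parsing."""
    creds = f"{quote(user, safe='')}:{quote(password, safe='')}"
    return f"{scheme}://{creds}@{host}:{port}/{dbname}"


def dbhub_entry(dsn: str) -> dict:
    # Assumption: package name and flags as given in the DBHub README
    # (npx @bytebase/dbhub --transport stdio --dsn ...); verify before use.
    return {
        "command": "npx",
        "args": ["-y", "@bytebase/dbhub", "--transport", "stdio", "--dsn", dsn],
    }


if __name__ == "__main__":
    pg = build_dsn("postgres", "openclaw_ro", "s3cr@t", "db.internal", 5432, "analytics")
    my = build_dsn("mysql", "openclaw_ro", "s3cr@t", "db.internal", 3306, "analytics")
    print(json.dumps({"mcpServers": {"postgres": dbhub_entry(pg),
                                     "mysql": dbhub_entry(my)}}, indent=2))
```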

Over BigQuery

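Use the community @ergut/mcp-bigquery-server package. You will need a JSON key for a GCP service account with read-only BigQuery access, such as the BigQuery Data Viewer role plus BigQuery Job User (running a query requires permission to create a job).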

Best for: teams already running analytics workloads on GCP.
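A common setup failure is pointing the server at the wrong kind of credential file. The sketch checks that the key really is a service account key, then builds the server entry from it. The flag names (`--project-id`, `--location`, `--key-file`) are recalled from the @ergut/mcp-bigquery-server README and should be treated as assumptions until you verify them. Defaulting the project to the key's own `project_id` is a simplification.

```python
import json
from pathlib import Path
from typing import Optional

# Fields every GCP service account JSON key contains.
REQUIRED_KEY_FIELDS = ("type", "project_id", "private_key", "client_email")


def check_service_account_key(path: Path) -> dict:
    """Fail early if the file is not a service account key."""
    key = json.loads(path.read_text(encoding="utf-8"))
    missing = [field for field in REQUIRED_KEY_FIELDS if field not in key]
    if missing:
        raise ValueError(f"key file is missing fields: {missing}")
    if key["type"] != "service_account":
        raise ValueError(f"expected a service account key, got type={key['type']!r}")
    return key


def bigquery_entry(key_path: Path, location: str = "US",
                   project_id: Optional[str] = None) -> dict:
    key = check_service_account_key(key_path)
    # Default to the key's own project; pass project_id to query a different one.
    project = project_id or key["project_id"]
    # Assumption: flag names as recalled from the @ergut/mcp-bigquery-server README.
    return {
        "command": "npx",
        "args": ["-y", "@ergut/mcp-bigquery-server",
                 "--project-id", project,
                 "--location", location,
                 "--key-file", str(key_path)],
    }
```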

Over Databricks

Clone and install a community-maintained Python MCP server for Databricks, then register it under the mcpServers key.

Works with Unity Catalog and legacy Hive Metastore tables. Best for teams using the Lakehouse architecture with Delta tables.
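This guide does not name a specific Databricks server, so the sketch below keeps everything server-specific as a parameter. It takes the path of the script you cloned and the Python interpreter from the virtualenv you installed it into, then passes connection settings through environment variables. DATABRICKS_HOST and DATABRICKS_TOKEN follow Databricks' usual naming. DATABRICKS_HTTP_PATH is a hypothetical name; match every variable to what your chosen server actually reads.

```python
from pathlib import Path


def databricks_entry(server_script: Path, host: str, http_path: str,
                     token: str, python_bin: str = "python") -> dict:
    """Build an mcpServers entry for a locally cloned Python MCP server.

    Env var names other than DATABRICKS_HOST/DATABRICKS_TOKEN are assumptions;
    match them to whatever the server you cloned actually reads.
    """
    if not host.startswith("https://"):
        raise ValueError("host must be the workspace URL, starting with https://")
    if not http_path.startswith("/sql/1.0/"):
        raise ValueError("http_path should be a SQL warehouse path like /sql/1.0/warehouses/<id>")
    return {
        "command": python_bin,  # point at the virtualenv the server is installed in
        "args": [str(server_script)],
        "env": {
            "DATABRICKS_HOST": host,            # common Databricks naming
            "DATABRICKS_TOKEN": token,          # token for a read-only principal
            "DATABRICKS_HTTP_PATH": http_path,  # hypothetical name
        },
    }
```

Issue the token for a principal that has only SELECT on the catalogs the agent should see, so Unity Catalog permissions cap what the agent can query.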

Over Snowflake

Snowflake Labs maintains an official MCP server, which is launched through uv. Install uv first (pip install uv), then add the server under mcpServers.

The server enforces the RBAC permissions of the specified role. Best for enterprise data teams with centralized Snowflake environments.
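Because the server inherits the role's permissions, choosing the role is the security decision that matters. The sketch renders a connection profile in the connections.toml format used by Snowflake's Python connector and CLI. It refuses Snowflake's built-in administrative roles and builds the server entry. Two things are assumptions to check against the Snowflake-Labs/mcp README: that the server selects that profile via `--connection-name`, and the exact `uvx snowflake-labs-mcp --service-config-file ...` invocation. Authentication settings are deliberately left out, and the account, user, and warehouse names are hypothetical.

```python
import json

# Snowflake's system-defined administrative roles; an analyst agent should
# never connect with one of these.
ADMIN_ROLES = {"ACCOUNTADMIN", "ORGADMIN", "SECURITYADMIN", "SYSADMIN", "USERADMIN"}


def connection_profile(name: str, account: str, user: str, role: str,
                       warehouse: str) -> str:
    """Render a connections.toml section. Add authentication settings separately."""
    if role.upper() in ADMIN_ROLES:
        raise ValueError(f"refusing to run the agent as {role}; use a read-only role")
    # json.dumps yields double-quoted strings that are also valid TOML basic strings.
    lines = [f"[{name}]"]
    for key, value in (("account", account), ("user", user),
                       ("role", role), ("warehouse", warehouse)):
        lines.append(f"{key} = {json.dumps(value)}")
    return "\n".join(lines) + "\n"


def snowflake_entry(service_config_file: str, connection_name: str) -> dict:
    # Assumption: command and flags as recalled from the Snowflake-Labs/mcp README.
    return {
        "command": "uvx",
        "args": ["snowflake-labs-mcp",
                 "--service-config-file", service_config_file,
                 "--connection-name", connection_name],
    }


if __name__ == "__main__":
    print(connection_profile("openclaw", "myorg-myaccount", "OPENCLAW_SVC",
                             "ANALYST_RO", "ANALYTICS_WH"))
    print(json.dumps(snowflake_entry("/srv/openclaw/snowflake_tools.yaml",
                                     "openclaw"), indent=2))
```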

Over Amazon Redshift

Redshift speaks the PostgreSQL wire protocol, so you can route it through DBHub with a postgres:// DSN on port 5439, Redshift's default port.
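Here is a sketch of the Redshift DSN and the resulting DBHub entry. Defaulting to the `dev` database (the name Redshift creates by default) and requesting SSL are choices you may need to change. That DBHub honors the `sslmode` query parameter is an assumption. The endpoint and user below are hypothetical.

```python
import json
from urllib.parse import quote

REDSHIFT_DEFAULT_PORT = 5439


def redshift_dsn(user: str, password: str, endpoint: str,
                 database: str = "dev", sslmode: str = "require") -> str:
    """postgres:// DSN for a Redshift endpoint (Redshift speaks the Postgres protocol).

    Assumption: DBHub honors the sslmode query parameter.
    """
    creds = f"{quote(user, safe='')}:{quote(password, safe='')}"
    return (f"postgres://{creds}@{endpoint}:{REDSHIFT_DEFAULT_PORT}/{database}"
            f"?sslmode={sslmode}")


def dbhub_entry(dsn: str) -> dict:
    # DBHub invocation as we understand it from its README; verify before use.
    return {"command": "npx",
            "args": ["-y", "@bytebase/dbhub", "--transport", "stdio", "--dsn", dsn]}


if __name__ == "__main__":
    dsn = redshift_dsn("openclaw_ro", "s3cret!",
                       "my-cluster.abc123.us-east-1.redshift.amazonaws.com")
    print(json.dumps({"mcpServers": {"redshift": dbhub_entry(dsn)}}, indent=2))
```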

Where OpenClaw Actually Works Well

It would be dishonest to say OpenClaw has no value. For the right use case, it does the job exceptionally well:

Personal developer use: A single engineer exploring a new dataset doesn't need governance infrastructure. OpenClaw gets answers fast.

Proof-of-concept demos: If you want to show stakeholders what natural language querying looks like, OpenClaw is a quick way to demo the concept.

Low-stakes ad-hoc analysis: For internal experiments where accuracy is checked manually, it's a reasonable starting point.

Self-hosted, air-gapped environments: Teams in highly regulated sectors who require completely on-prem deployments and possess the engineering bandwidth to manage the technical overhead.

The Real Limitations of OpenClaw for Business Analytics

Here is where the honest conversation begins. OpenClaw was built as a personal assistant, and it behaves like one. When you try to use it across a team, at scale, or for decisions that actually matter, the gaps become significant.

1. It Has No Understanding of Your Business

OpenClaw reads your database schema: table names, column names, data types. That is all it knows.

It does not know that your company defines "active customer" as someone with a login in the last 90 days. It does not know that "revenue" in one table means gross, and in another, it means net.

The result: It produces answers that are technically correct SQL but operationally wrong. Unless you know enough SQL to audit the query, you will not catch the error.
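A toy example makes the gap concrete. Using a hypothetical schema, the query a schema-only reading suggests (trust the `status` column) and the query that encodes the business definition (a login in the last 90 days) are both valid SQL. They still disagree by a factor of three.

```python
import sqlite3
from datetime import date, timedelta

AS_OF = date(2025, 6, 30)  # fixed "today" so the result is reproducible


def demo_db() -> sqlite3.Connection:
    """Hypothetical schema: a status column plus a separate login history."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, status TEXT);
        CREATE TABLE logins (customer_id INTEGER, login_date TEXT);
    """)
    conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", [
        (1, "Acme", "active"),
        (2, "Globex", "active"),
        (3, "Initech", "active"),
    ])
    conn.executemany("INSERT INTO logins VALUES (?, ?)", [
        (1, (AS_OF - timedelta(days=5)).isoformat()),    # recent login
        (2, (AS_OF - timedelta(days=200)).isoformat()),  # dormant for months
        # Initech has never logged in
    ])
    return conn


def schema_only_active(conn: sqlite3.Connection) -> int:
    """What a schema-only reading suggests: trust the status column."""
    return conn.execute(
        "SELECT COUNT(*) FROM customers WHERE status = 'active'").fetchone()[0]


def business_active(conn: sqlite3.Connection, as_of: date, days: int = 90) -> int:
    """The company's definition: at least one login in the last `days` days."""
    cutoff = (as_of - timedelta(days=days)).isoformat()  # ISO dates compare as text
    return conn.execute(
        "SELECT COUNT(DISTINCT customer_id) FROM logins WHERE login_date >= ?",
        (cutoff,)).fetchone()[0]


if __name__ == "__main__":
    conn = demo_db()
    print("schema-only:", schema_only_active(conn))               # → 3
    print("business definition:", business_active(conn, AS_OF))  # → 1
```

Nothing in the schema tells the agent which of these two queries your company means by "active customer".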

2. No Governance, No Access Control

OpenClaw has no concept of who should see what. Connect it to your database, and anyone who can message the agent can query every table its credentials reach: customer PII, financial data, HR records. Because every user shares the same connection, the database's own role-based access control and row-level security cannot tell one person from another. For an enterprise rolling it out to 50 business users, this is a massive liability.

3. No Audit Trail or Compliance Controls

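Regulated industries need to know exactly what data was accessed, by whom, and when. OpenClaw's daily memory files are notes the agent keeps for itself, not an audit log. Nothing reliably records which user ran which query against which data. In financial services, healthcare, or any SOX/GDPR-regulated environment, this is a blocker.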

4. Answers Are Not Validated

When OpenClaw returns an answer, there is no mechanism for a domain expert to review it, flag it as incorrect, or mark it as verified. Every answer comes with the same implicit confidence.

5. It Does Not Scale Beyond a Single User

OpenClaw can work well for a single developer exploring a dataset, but it breaks down when you try to roll it out across a team. It lacks the organizational layer needed for shared analytics: consistent definitions, shared workflows, and feedback loops.

6. No Collaboration Layer

OpenClaw is essentially a one-to-one interface between a person and a database. There is no native way to share a query and its context as a reusable, team-facing artifact. There is no built-in way to collaborate on interpretations, add commentary, and reach agreement. There is also no way to route results for review or verification by data experts or to turn answers into standardized deliverables like recurring reports, dashboards, or presentation templates.

7. No Expert-in-the-Loop Learning

In real organizations, data experts define and maintain the "source of truth." OpenClaw does not provide a structured way to capture and enforce that expertise over time. It cannot centralize metric definitions and business rules and then apply them consistently for everyone. It does not learn from corrections, so the same mistakes repeat across users. Without a governance layer, different teams create their own interpretations, and trust in the data quickly erodes.

Genloop: Everything OpenClaw Offers — And Everything It Doesn't

If what you need is a personal assistant for your own database exploration, OpenClaw gets the job done. But if you need an AI analytics platform your whole business can trust — one that understands your business, governs who sees what, and helps your team move from insight to action — that is a fundamentally different product.

That is what Genloop is built for.

Governed and Trusted by Design

Genloop is built for teams, not individual developers. It enforces role-based access control so finance teams only see finance data and sales teams only see sales data. Every query is logged so compliance and security teams have a clear audit trail.

You define metrics, KPIs, business rules, and terminology once, and Genloop applies them consistently across every answer.

Multi-Modal Unified Analytics

Genloop's agentic architecture can pull data from multiple databases and combine it with supporting unstructured information to produce a deeper, more complete analysis.

Accurate Answers, Not Just Fast Ones

Genloop is benchmarked on Spider 2.0, a rigorous text-to-SQL benchmark for enterprise data. Results go through validation before they reach business users. Domain experts can review, approve, and flag answers, and the system learns from corrections.

Understands Your Business Context

Genloop models organizational context, data context, and domain definitions. When someone asks for "top customers," it knows what "top" means for your business and whether "customer" refers to an individual or an account. When someone asks for a "revenue projection," it can follow your agreed calculation logic and account for known exceptions such as special discounts.

Proactive, Not Reactive

Genloop surfaces relevant insights automatically, including anomalies, emerging trends, and open items, so teams do not have to remember which dashboards to check.

Workflows for Review and Brainstorming

Genloop supports expert-in-the-loop review workflows. When an answer is uncertain or high impact, business users can request review from a data expert and proceed with confidence.

Scheduled and Automated Workflows

Schedule recurring analyses for individuals or teams. Send a Monday pipeline review automatically, or trigger alerts and suggested next actions when thresholds are breached.

Auditable, Traceable Answers

Each investigation includes the underlying SQL or code, source context, and supporting evidence so results can be audited.

Visualization and Data Storytelling

Genloop turns investigations into clear narratives and visualizations that can be sliced and shared. Charts can be exported for broader collaboration.

Drives Action (With Guardrails)

Genloop can propose and create action tickets, routing them through the appropriate approval steps and respecting each user's access and decision authority.

Performance Tracked, Outcomes Mapped

Genloop tracks insights and recommended actions and ties them back to outcomes, helping leadership see whether analytics is driving decisions.

Continuous Learning

Genloop improves over time by incorporating validated feedback into an organizational memory, with data-expert review before anything becomes part of the source of truth.

OpenClaw vs. Genloop: At a Glance

| Capability | OpenClaw | Genloop |
|---|---|---|
| Natural language queries (text → SQL) | ✓ | ✓ |
| Warehouse support (BigQuery / Snowflake / Databricks / Redshift / Postgres) | ✓ | ✓ |
| Unified multi-modal analytics | ✗ | ✓ |
| Business definitions (metrics, KPIs, "active customer", revenue logic) | ✗ (schema-only) | ✓ (definitions enforced) |
| Access control (RBAC, row/column security, least-privilege) | ✗ | ✓ |
| Audit trail (who queried what, when) + compliance readiness | ✗ | ✓ |
| Accuracy guardrails (validation, test cases, deterministic checks) | ✗ | ✓ |
| Expert review workflow (SME approval, flagging, corrections) | ✗ | ✓ |
| Collaboration layer (share investigations, commentary, reuse) | ✗ (1:1 assistant) | ✓ (team workflows) |
| Proactive analytics (alerts, anomalies, reminders) | ✗ | ✓ |
| Automation (scheduled runs, recurring digests, triggers) | ✗ | ✓ |
| Outcome tracking (insight → action → impact) | ✗ | ✓ |
| Learning from feedback (organizational memory, consistency over time) | ✗ | ✓ (governed learning) |
| Enterprise deployment (multi-user, controls, scale) | Limited (personal tool) | ✓ |

Who Should Use OpenClaw vs. Genloop?

OpenClaw is right for you if:

You are a solo developer or analyst exploring data for personal use.

You want to prototype or demo conversational analytics quickly.

You need a lightweight, self-hosted tool with no SaaS dependencies.

Governance, accuracy validation, and compliance are not requirements.

Genloop is right for you if:

You need analytics your whole team — including non-technical business users — can use and trust.

You operate in a regulated industry or need compliance controls.

You want analytics that leads to action, not just answers.

You need the system to learn your business and improve over time.

You want to close the loop between insights and outcomes.

Getting Started With Genloop

Genloop connects to your existing data stack, learns your business definitions, respects your access controls, and gives every user in your organization a trusted, governed AI analyst.

If you have been using OpenClaw and are ready to move from personal experiments to enterprise-grade analytics, start with a demo.