Generative AI is reshaping business intelligence, and Databricks Genie is one platform driving this shift. For enterprises that have consolidated their data on Databricks, Genie enables natural language querying through Unity Catalog. But enterprise adoption has revealed where this approach breaks down.

Most organizations operate across multiple data warehouses, not just Databricks. Business metrics like churn rate or customer acquisition cost (CAC) are defined differently across teams, regions, and source systems. When an analytics platform cannot reconcile these definitions or query across environments, it generates technically correct SQL that maps to the wrong business logic. This post evaluates where Genie's architectural constraints create gaps in enterprise agentic analytics and which platforms are built to close them.

Where Databricks Genie Stops

Genie translates natural language to SQL and executes queries against Unity Catalog. If data is already in Databricks and schemas reflect business logic, Genie is fast and practical.

But several architectural limitations surface in enterprise environments:

Unity Catalog dependency creates vendor lock-in. Genie only queries data registered in Unity Catalog. Organizations with CRM data in Salesforce, financial data in Snowflake, and product telemetry in BigQuery cannot ask cross-source questions without manual ETL consolidation into Databricks first.

Stateless query execution without business context. Genie treats each query as independent. It does not learn company-specific terminology or enforce consistent metric definitions across queries. One team's revenue calculation includes refunds, another's does not. Genie returns both as equally valid SQL.

Query throttling limits exploratory analysis. Genie caps throughput at 20 queries per minute per workspace. For teams running exploratory queries during root-cause investigation, this becomes a bottleneck.

Governance without semantic mapping. Genie enforces row-level and column-level security at the database layer but has no semantic layer to map business terminology to governed calculation logic across different business units.

No multi-step reasoning or root-cause workflows. Genie translates natural language to SQL. It does not identify root causes or chain queries into multi-step investigations.
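The refund example above can be made concrete. The following is a minimal sketch, assuming a hypothetical `orders` table with a `refunded` flag (the table, columns, and values are illustrative and not taken from any real schema), run against an in-memory SQLite database rather than Databricks. Both queries are valid SQL for "revenue", yet they return different numbers.

```python
import sqlite3

# Hypothetical orders table; names and values are illustrative only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL, refunded INTEGER)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, 100.0, 0), (2, 250.0, 1), (3, 50.0, 0)],
)

# Team A: gross revenue, refunded orders are still counted.
GROSS_REVENUE_SQL = "SELECT SUM(amount) FROM orders"
# Team B: net revenue, refunded orders are excluded.
NET_REVENUE_SQL = "SELECT SUM(amount) FROM orders WHERE refunded = 0"

gross_revenue = conn.execute(GROSS_REVENUE_SQL).fetchone()[0]
net_revenue = conn.execute(NET_REVENUE_SQL).fetchone()[0]

print(f"gross: {gross_revenue}, net: {net_revenue}")
```

Neither query is wrong in isolation. Without a governed definition, a text-to-SQL tool has no basis for preferring one over the other.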

For teams already centralized in Databricks with clean schemas and no multi-source federation requirements, Genie is practical. For enterprise environments with distributed data, inconsistent metric definitions, and complex governance needs, it is the first step, not the full solution.

What Enterprise Analytics Requires

This section outlines the dimensions required for a conversational analytics platform to deliver business answers, not just syntactically correct SQL.

Semantic Layer Quality: Whether the platform maps company-specific terminology to governed business logic across sources, or generates queries that measure the wrong calculation despite syntactically correct SQL.

Multi-Source Federation: Whether it unifies data across Snowflake, Postgres, BigQuery, and data lakes at query time, or requires ETL pipelines and data consolidation first.

Business Context Preservation: Whether it explains why metrics moved and suggests next investigative steps, or returns numbers without reasoning.

Governance and Security Depth: Whether it supports row-level RBAC, SOC 2 compliance, air-gapped deployment, and deterministic execution with audit trails.

Native Warehouse Execution: Whether a platform runs queries directly inside a single warehouse engine or analyzes data across connected sources.

Cost Structure: Whether pricing scales predictably with data sources and query volume, or escalates with per-user licensing and compute consumption.
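To illustrate the Semantic Layer Quality dimension, here is a minimal sketch of mapping business terms to a single governed calculation. It is not any vendor's implementation. The metric names, table names, aliases, and SQL expressions are all hypothetical, and a production semantic layer would also carry ownership, access rules, and source routing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    """One governed metric: a name, a source table, and an agreed expression."""
    name: str
    table: str
    expression: str


# Hypothetical governed definitions; every value here is illustrative.
GOVERNED_METRICS = {
    "revenue": MetricDefinition(
        name="net_revenue",
        table="finance.orders",
        expression="SUM(CASE WHEN refunded = 0 THEN amount ELSE 0 END)",
    ),
    "churn rate": MetricDefinition(
        name="churn_rate",
        table="crm.accounts",
        expression="AVG(CASE WHEN churned = 1 THEN 1.0 ELSE 0.0 END)",
    ),
}

# Company-specific synonyms that should resolve to the same governed metric.
ALIASES = {"sales": "revenue", "net revenue": "revenue", "churn": "churn rate"}


def resolve_metric(term: str) -> MetricDefinition:
    """Map a business term to its governed definition, or fail loudly."""
    key = term.strip().lower()
    key = ALIASES.get(key, key)
    if key not in GOVERNED_METRICS:
        raise KeyError(f"No governed definition for {term!r}")
    return GOVERNED_METRICS[key]


def compile_metric_sql(term: str) -> str:
    """Build SQL from the governed expression instead of guessing from schema."""
    metric = resolve_metric(term)
    return f"SELECT {metric.expression} AS {metric.name} FROM {metric.table}"


print(compile_metric_sql("Sales"))
```

The design choice worth noting is that an unknown term raises an error instead of falling back to a best-guess query, so an ungoverned metric cannot silently produce a plausible but wrong number.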

Platforms That Close the Gap

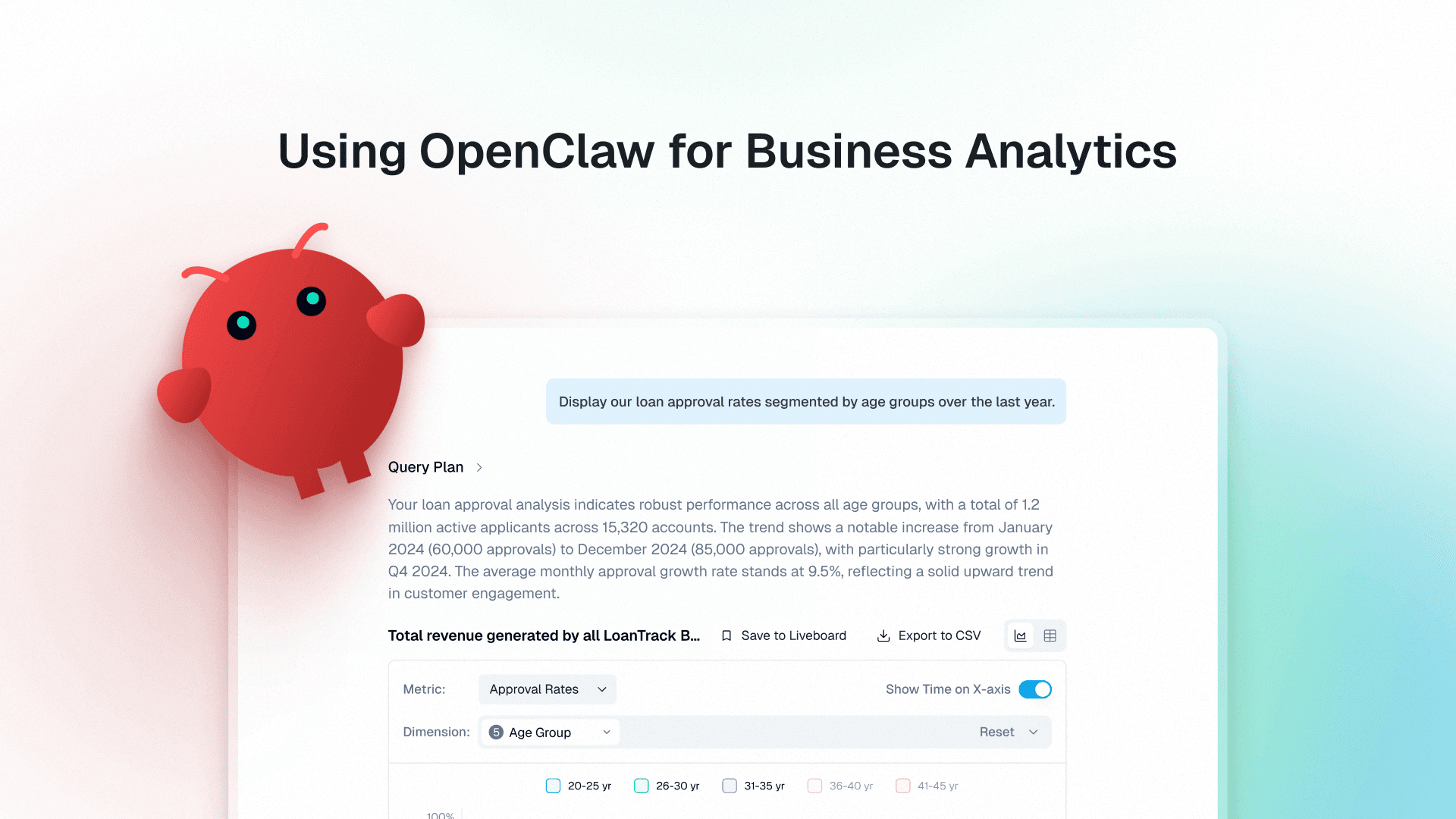

1. Genloop

Best for: Enterprise data teams with distributed data sources who need non-technical teams to ask ad-hoc questions without building dashboards or waiting for data engineering tickets

Genloop is an agentic analytics platform built for enterprise data environments. It federates across Snowflake, Postgres, BigQuery, and data lakes through a unified semantic layer called Unified Business Memory, which maps company-specific terminology to governed business logic. A finance analyst can ask "Why did customer acquisition cost spike in APAC last month?" in plain language and receive a governed answer with root-cause analysis and suggested next investigative steps without creating a data team ticket.

| Dimension | How Genloop performs |
|---|---|
| Semantic Layer Quality | Unified Business Memory maps company-specific terminology to governed business logic across multiple sources with human-in-the-loop validation that catches errors before propagation. |
| Multi-Source Federation | Connects directly to Snowflake, Postgres, BigQuery, and more. |
| Business Context Preservation | Agentic workflows deliver multi-step analysis, root-cause identification, and suggested next actions that explain why metrics moved rather than only reporting changed values. |
| Governance and Security Depth | SOC 2 Type II and ISO 27001 certified with row-level and column-level RBAC enforced at query time, air-gapped deployment support, and deterministic execution with full audit trails. |
| Native Warehouse Execution | Analyzes data across connected sources through a unified semantic layer. |
| Cost Structure | Optimized token economics via smaller models focused on analytics workflows (designed to keep per-question cost predictable at scale). |

2. Snowflake Cortex Analyst

Best for: Organizations centralized on Snowflake with well-governed schemas who need native conversational analytics without data movement

Snowflake Cortex Analyst runs natively inside Snowflake, converting natural language to SQL without data replication. It uses a semantic model defined over Snowflake objects (tables, views, and their metadata) to ground queries in the available schema structure.

The constraint is Snowflake lock-in. Organizations using BigQuery, Redshift, or PostgreSQL cannot query those sources without replicating data into Snowflake first. Cortex Analyst does not support multi-step reasoning, agentic workflows, or unstructured data analysis. Semantic layer quality depends entirely on Snowflake metadata governance practices.

| Dimension | How Snowflake Cortex Analyst performs |
|---|---|
| Semantic Layer Quality | Schema-based semantic reasoning that generates accurate SQL when Snowflake metadata is well-governed, but cannot map business terminology that exists outside database schema definitions. |
| Multi-Source Federation | Locked to Snowflake with limited external table support that requires data replication for effective cross-source analysis. |
| Business Context Preservation | Generates query results without multi-step reasoning, root-cause identification, or suggested next investigative actions. |
| Governance and Security Depth | Strong native Snowflake governance with row-level security, column masking, and role-based access control enforced through Snowflake's security model. |
| Native Warehouse Execution | Runs natively inside Snowflake, executing queries directly through the Snowflake warehouse engine. |
| Cost Structure | Pricing tied to Snowflake compute consumption with always-on warehouse costs that scale linearly with query volume. |

3. ThoughtSpot

Best for: Organizations that prefer search-based BI exploration with curated semantic models

ThoughtSpot uses a search-first interface combined with a semantic layer (ThoughtSpot Semantic Model) to enable business users to explore data without SQL knowledge. It connects to multiple data sources through live connectors and caches results in its semantic layer.

The trade-off is manual semantic curation and cost. The semantic layer requires upfront definition of metrics, dimensions, and relationships in ThoughtSpot's interface before analysis becomes effective. Query performance depends on connector latency and semantic layer design. The semantic model is proprietary, creating vendor lock-in.

| Dimension | How ThoughtSpot performs |
|---|---|
| Semantic Layer Quality | Mature semantic model that supports complex business logic, hierarchies, and calculated fields when fully curated, but requires manual definition and ongoing maintenance. |
| Multi-Source Federation | Supports multiple sources through connectors but requires data centralization into ThoughtSpot's backend for effective performance, not true federation without data movement. |
| Business Context Preservation | Returns answers with drill-down exploration capabilities but limited multi-step analysis beyond what analysts build into the semantic model. |
| Governance and Security Depth | Row-level and column-level RBAC with audit trails and compliance certifications for mature governance controls. |
| Native Warehouse Execution | Works through connectors and semantic caching rather than running directly inside a specific warehouse ecosystem. |
| Cost Structure | Per-user licensing that scales with data volume and user count, creating escalating costs for large analytical teams. |

4. Microsoft Power BI Copilot

Best for: Organizations deeply invested in the Microsoft ecosystem who need conversational analytics within existing Power BI semantic models

Power BI Copilot embeds conversational analytics into Power BI's semantic model layer. It translates natural language to DAX (Data Analysis Expressions) and executes queries against Power BI datasets.

The constraint is Power BI lock-in. Organizations with data outside Power BI must replicate it first. DAX is less expressive than SQL for complex analytical workflows. Semantic layer quality depends entirely on Power BI model design. Query accuracy suffers when business terminology does not map cleanly to DAX measures.

| Dimension | How Power BI Copilot performs |
|---|---|
| Semantic Layer Quality | DAX-based semantic layer that depends entirely on Power BI model design quality, with limited expressiveness for complex analytical logic compared to SQL-based platforms. |
| Multi-Source Federation | Locked to Power BI datasets with data replication required for sources outside the Power BI environment. |
| Business Context Preservation | Returns query results without multi-step reasoning, root-cause workflows, or suggested next investigative actions. |
| Governance and Security Depth | Row-level security and RBAC capabilities limited to Power BI's governance model, with audit trails available through the Microsoft ecosystem. |
| Native Warehouse Execution | Runs directly within Power BI datasets and the Microsoft analytics ecosystem. |
| Cost Structure | Per-user licensing through Microsoft 365 or Power BI Premium capacity pricing tied to dataset size and refresh frequency. |

Where Each Platform Works Best

The scenarios below highlight where each platform delivers the most value.

Snowflake-centric organizations: If you've consolidated product analytics, finance data, and customer metrics in a single Snowflake warehouse, Snowflake Cortex Analyst executes queries directly in-warehouse without additional infrastructure.

Distributed enterprise data and agentic analytics workflows: Many SaaS companies operate with CRM data in Salesforce, product telemetry in BigQuery, finance in Snowflake, and operational data in Postgres. Platforms like Genloop enable teams to analyze metrics across these systems without requiring centralization and support agentic workflows that help investigate why metrics changed.

Search-driven BI exploration: Organizations that prefer search-based analytics and dashboard exploration often adopt ThoughtSpot. Business users can type queries into a search interface to explore curated datasets and drill into dashboards without writing SQL.

Microsoft analytics ecosystems: Organizations running Power BI, Microsoft Fabric, and Azure Synapse get the most leverage from Power BI Copilot. Conversational analytics runs directly within existing Power BI semantic models, preserving governance and deployment patterns.
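The distributed-data scenario above comes down to combining results from separate systems at query time instead of copying everything into one warehouse first. The sketch below shows the general idea only and is not how Genloop or any other platform implements federation. Two in-memory SQLite databases stand in for a CRM and a finance system, and the table names, columns, and figures are hypothetical.

```python
import sqlite3


def make_source(schema_sql, insert_sql, rows):
    """Create an in-memory database standing in for one remote system."""
    conn = sqlite3.connect(":memory:")
    conn.execute(schema_sql)
    conn.executemany(insert_sql, rows)
    return conn


# Stand-in for the CRM: new customers acquired per region (illustrative).
crm = make_source(
    "CREATE TABLE new_customers (region TEXT, customers INTEGER)",
    "INSERT INTO new_customers VALUES (?, ?)",
    [("APAC", 40), ("EMEA", 50)],
)

# Stand-in for the finance warehouse: marketing spend per region (illustrative).
finance = make_source(
    "CREATE TABLE marketing_spend (region TEXT, spend REAL)",
    "INSERT INTO marketing_spend VALUES (?, ?)",
    [("APAC", 20000.0), ("EMEA", 15000.0)],
)


def federated_cac(crm_conn, finance_conn):
    """Push an aggregate down to each source, then join the small results."""
    customers = dict(
        crm_conn.execute(
            "SELECT region, SUM(customers) FROM new_customers GROUP BY region"
        )
    )
    spend = dict(
        finance_conn.execute(
            "SELECT region, SUM(spend) FROM marketing_spend GROUP BY region"
        )
    )
    shared_regions = sorted(customers.keys() & spend.keys())
    # CAC = marketing spend / new customers, skipping regions with no customers.
    return {r: spend[r] / customers[r] for r in shared_regions if customers[r]}


cac_by_region = federated_cac(crm, finance)
print(cac_by_region)
```

Each source only returns a per-region aggregate, so the join happens over a handful of rows and no raw data is moved between systems.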

How to Choose

Different platforms address different parts of the enterprise conversational analytics problem. Some focus on native warehouse performance, others prioritize semantic governance, and others combine multi-source federation with automated investigation workflows.

| Platform | Semantic Layer Quality | Multi-Source Federation | Business Context Preservation | Governance and Security Depth | Native Warehouse Execution | Cost Structure |
|---|---|---|---|---|---|---|
| Genloop | ✓ | ✓ | ✓ | ✓ | ◐ | ✓ |
| Snowflake Cortex Analyst | ◐ | ◐ | ◐ | ✓ | ✓ | ✓ |
| ThoughtSpot | ✓ | ◐ | ◐ | ✓ | ◐ | ✗ |
| Power BI Copilot | ◐ | ✗ | ◐ | ✓ | ✓ | ◐ |
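One way to apply the comparison table to your own priorities is a simple weighted score. The sketch below copies the ratings from the table and assumes that ✓ means full support (1.0), ◐ partial support (0.5), and ✗ no support (0.0). That numeric mapping and the weights are assumptions for illustration, not part of the original comparison.

```python
# Assumed numeric meaning of the table symbols (not defined in the article).
SYMBOL_SCORE = {"✓": 1.0, "◐": 0.5, "✗": 0.0}

DIMENSIONS = [
    "Semantic Layer Quality",
    "Multi-Source Federation",
    "Business Context Preservation",
    "Governance and Security Depth",
    "Native Warehouse Execution",
    "Cost Structure",
]

# Ratings copied row by row from the comparison table, in DIMENSIONS order.
RATINGS = {
    "Genloop": ["✓", "✓", "✓", "✓", "◐", "✓"],
    "Snowflake Cortex Analyst": ["◐", "◐", "◐", "✓", "✓", "✓"],
    "ThoughtSpot": ["✓", "◐", "◐", "✓", "◐", "✗"],
    "Power BI Copilot": ["◐", "✗", "◐", "✓", "✓", "◐"],
}


def rank_platforms(weights=None):
    """Return (platform, score) pairs sorted by weighted score, highest first.

    `weights` maps a dimension name to its importance; missing dimensions
    default to 1.0, so passing nothing gives an unweighted sum.
    """
    weights = weights or {}
    scores = {}
    for platform, symbols in RATINGS.items():
        scores[platform] = sum(
            SYMBOL_SCORE[symbol] * weights.get(dimension, 1.0)
            for dimension, symbol in zip(DIMENSIONS, symbols)
        )
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Unweighted ranking, then one that heavily favors in-warehouse execution.
print(rank_platforms())
print(rank_platforms({"Native Warehouse Execution": 4.0}))
```

Changing the weights changes the ranking, which is the point: a team that must run everything inside one warehouse will weight the table very differently from a team with data spread across several systems.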

FAQs

How does Genloop's Unified Business Memory differ from traditional semantic layers?

Traditional semantic layers like Looker's LookML or Power BI's DAX require technical expertise to build and maintain through code. Unified Business Memory is business-first: metric definitions, dimensions, and relationships are defined in plain language and Genloop translates them into executable governed semantics. It improves through human-in-the-loop validation and confidence scoring as teams use the platform.

What should teams evaluate when choosing alternatives to Databricks Genie?

Key factors include data source connectivity, semantic modeling, governance controls, and how reliably the platform converts natural language into analytical queries. The best option depends on whether an organization’s data stack is centralized or distributed across multiple systems.

How do platforms like Genloop handle business metrics and definitions?

Accurate analytics depends on consistent metric definitions. Platforms like Genloop use a semantic layer called Unified Business Memory to map business terms such as revenue or churn to the correct data definitions across connected sources.

Can conversational analytics platforms query data across multiple warehouses?

Capabilities vary. Some solutions are optimized for a single warehouse ecosystem, while others support connections to multiple databases and warehouses. This distinction is important for organizations with distributed data environments.

How do pricing models differ across agentic analytics platforms?

Pricing typically follows either per-user licensing or usage-based models. Platforms like Genloop use usage-based pricing tied to query volume and connected data sources rather than the number of users.