Business intelligence has traditionally relied on static dashboards and analyst-driven exploration. A leader asks a question. An analyst writes queries, builds charts, and returns with an answer. The model works, but it struggles to keep pace as data environments grow more complex.

2026 marks a turning point. Agentic data analysis is moving from pilot programs to production adoption across enterprises. AI agents can now plan multi-step analysis, run queries across multiple data sources, interpret the results, and keep investigating without a new prompt for every step. That capability fundamentally changes how organizations extract value from data.

This shift changes how analytics platforms are evaluated. The question is no longer which tool builds the best dashboard. It is which systems can reliably translate natural language questions into governed enterprise data logic and perform multi-step agentic workflows at scale. This blog helps business leaders evaluate the leading platforms and make informed decisions for their organization's analytics future.

TLDR

This blog evaluates seven platforms based on the architectural capabilities that determine whether agentic analytics succeeds in production environments:

Quality: The platform's capabilities, its ability to unify different data sources in one interface, and the depth of analytics it supports.

Reliability: Whether it delivers consistently accurate answers or frequently hallucinates.

Trust: Whether it includes a governance layer, such as role-based access control (RBAC), row-level security (RLS), and column-level security (CLS), plus its security posture and deployment flexibility.

Scale: Whether it can scale to all users and analytics use cases across the organization.

Cost: Pricing and token economics.

Coverage: Whether it handles long-tail and edge-case questions, not just the ones that were explicitly modeled.

The platforms covered include Genloop, ThoughtSpot Spotter, Databricks Genie, Microsoft Fabric, Sigma Computing, Domo, and Snowflake Cortex.

What Agentic Data Analysis Actually Means

Agentic data analysis is not just a smarter dashboard; it is a fundamentally different execution model.

Traditional BI tools provide a click-based interface where users select filters or navigate predefined views to access data. Agentic data analytics systems, by contrast, offer a conversational interface where business users can ask questions or trigger data actions in natural language. These systems must combine the convenience of conversational analytics with the rigor and governance of enterprise BI platforms.

In production environments, this execution model relies on four core architectural components:

Data source connectivity and federation: The platform's ability to connect to multiple data sources and perform federated analytics without requiring data copies or movement.

Context graph sophistication: The depth and completeness of the semantic layer, including how much manual effort is required to build and maintain business context.

Agent reasoning capability: The quality of the reasoning engine that operates on top of the context graph to plan and execute multi-step analysis.

Continuous learning: Whether the system learns from user interactions and improves over time, rather than treating each session as stateless.

Most platforms claim agentic capability, but what they mean varies significantly. Some have simply added a chat interface on top of an existing semantic model or dashboard. Others have built sophisticated reasoning loops but left the semantic layer shallow or incomplete. Only a few platforms offer genuine business memory—systems that accumulate context across interactions and sessions rather than starting fresh each time.
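To make the context graph and continuous learning components more concrete, here is a minimal Python sketch of a semantic layer that maps business phrases to governed metric definitions and stores synonyms that users confirm. The class names, the `Net Revenue` metric, and its SQL fragment are hypothetical; real platforms model far more (entities, joins, lineage, access policies), and this does not reflect any vendor's actual implementation.

```python
from dataclasses import dataclass, field
from typing import Optional


def _norm(phrase: str) -> str:
    """Normalize a business phrase for lookup (case- and whitespace-insensitive)."""
    return " ".join(phrase.lower().split())


@dataclass
class Metric:
    """A governed metric: one approved definition per business concept (hypothetical)."""
    name: str
    sql: str
    description: str


@dataclass
class ContextGraph:
    """Toy semantic layer: governed metrics plus synonyms learned from users."""
    metrics: dict = field(default_factory=dict)   # normalized name -> Metric
    synonyms: dict = field(default_factory=dict)  # normalized phrase -> metric key

    def add_metric(self, metric: Metric) -> None:
        self.metrics[_norm(metric.name)] = metric

    def resolve(self, phrase: str) -> Optional[Metric]:
        """Return the governed metric for a phrase, or None if it is unknown."""
        key = _norm(phrase)
        if key in self.metrics:
            return self.metrics[key]
        if key in self.synonyms:
            return self.metrics[self.synonyms[key]]
        return None  # unresolved: ask a clarifying question instead of guessing

    def learn_synonym(self, phrase: str, metric_name: str) -> None:
        """Continuous learning: record a phrase-to-metric mapping a user confirmed."""
        target = _norm(metric_name)
        if target not in self.metrics:
            raise KeyError(f"unknown metric: {metric_name}")
        self.synonyms[_norm(phrase)] = target


graph = ContextGraph()
graph.add_metric(Metric(
    name="Net Revenue",
    sql="SUM(CASE WHEN status = 'completed' THEN amount ELSE 0 END)",
    description="Order revenue excluding refunded orders",
))

print(graph.resolve("sales"))        # None: the term is not known yet
graph.learn_synonym("sales", "net revenue")
print(graph.resolve("Sales").sql)    # now resolves to the governed definition
```

The design choice that matters is returning `None` instead of a best guess: an unresolved term becomes a clarifying question, and the user's answer is kept so later questions resolve without asking again. The dictionaries here live in memory; a system with genuine business memory would persist them across sessions.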

Top 7 Agentic Data Analytics Tools in 2026

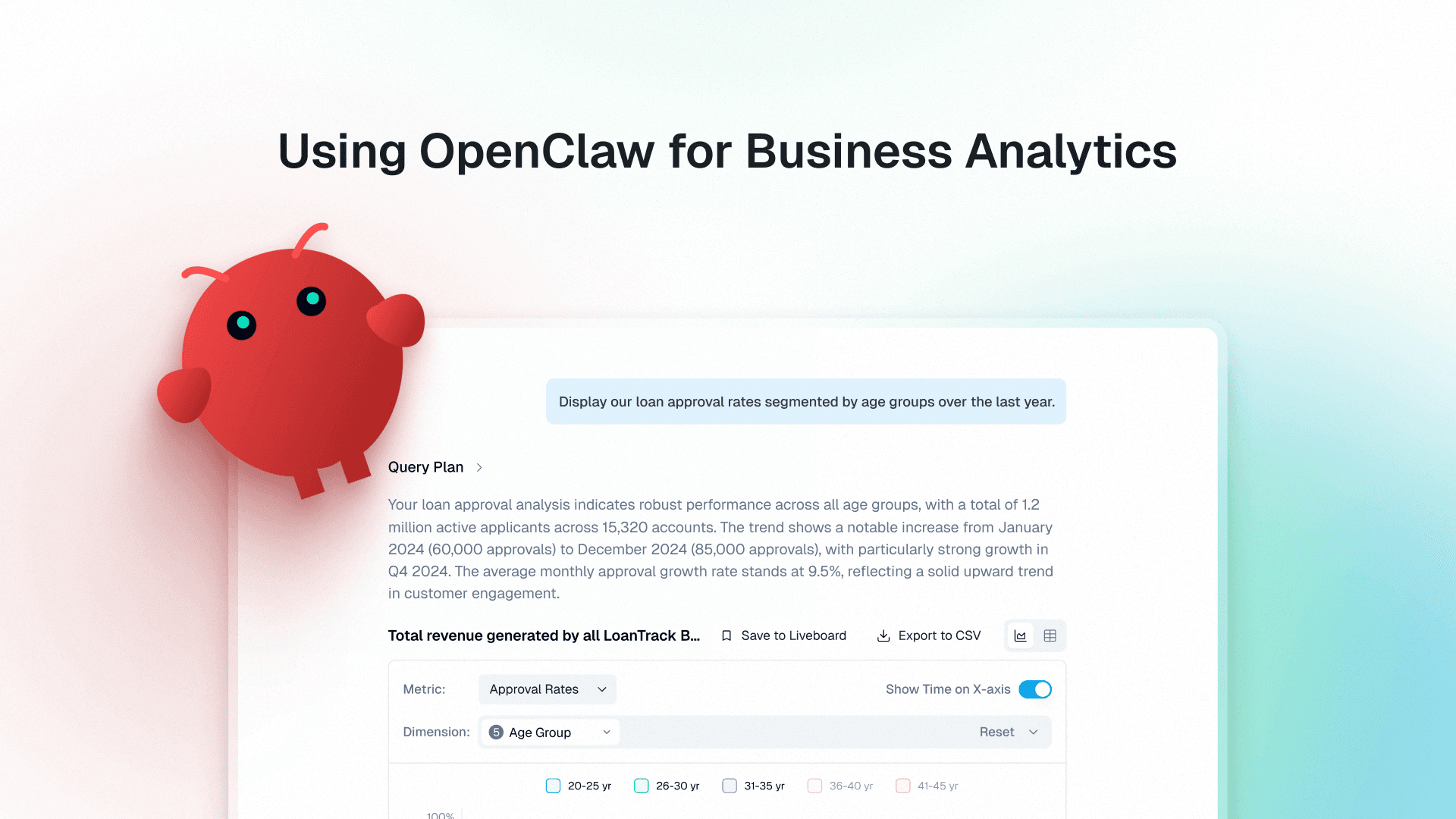

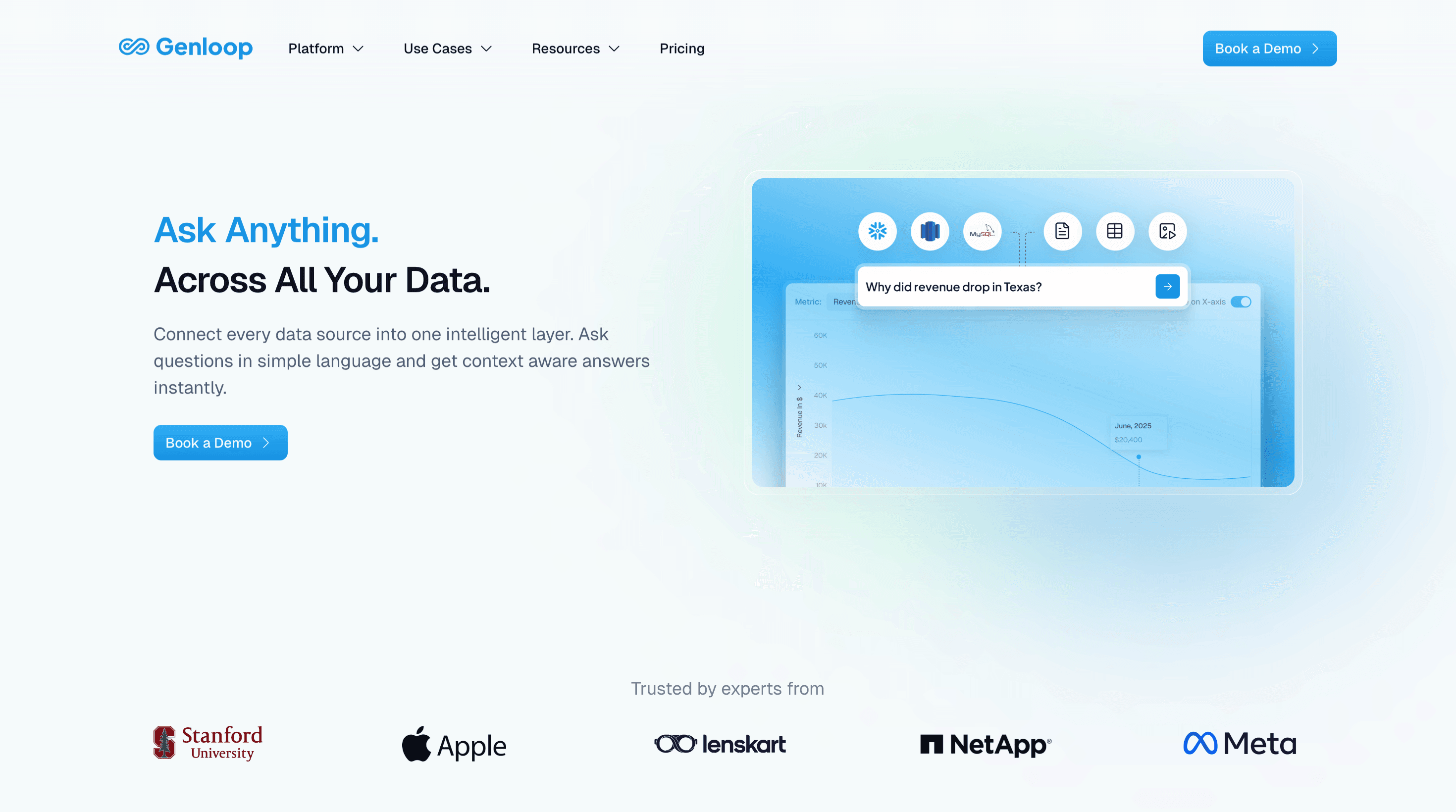

1. Genloop

Best for: Enterprise teams that need high-quality unified analytics with memory, governance, and reliable execution

Genloop is the most accurate data reasoning platform available, scoring 96.7% on rigorous benchmarks like Spider 2. It is the only platform that builds a multi-modal context graph of the enterprise and continuously refines it with usage.

Dimensions:

| Dimension | How Genloop performs |
|---|---|
| Quality | Deep reasoning insights including prescriptive analysis and decision automation. Supports specialized agents for analysis and deep-dive actions. |
| Reliability | Distilled models + proprietary retrieval. Includes a reliability engine that flags potentially uncertain answers and supports validation workflows. |
| Trust | Governance, decision traceability, and access-controlled execution paths designed for enterprise environments. |
| Scale | Builds a comprehensive understanding of the enterprise with minimal ongoing human maintenance; designed for broad coverage including long-tail questions. |
| Cost | Optimized token economics via smaller models focused on analytics workflows (designed to keep per-question cost predictable at scale). |
| Coverage | Handles edge cases and tail queries that other platforms often fail on. |

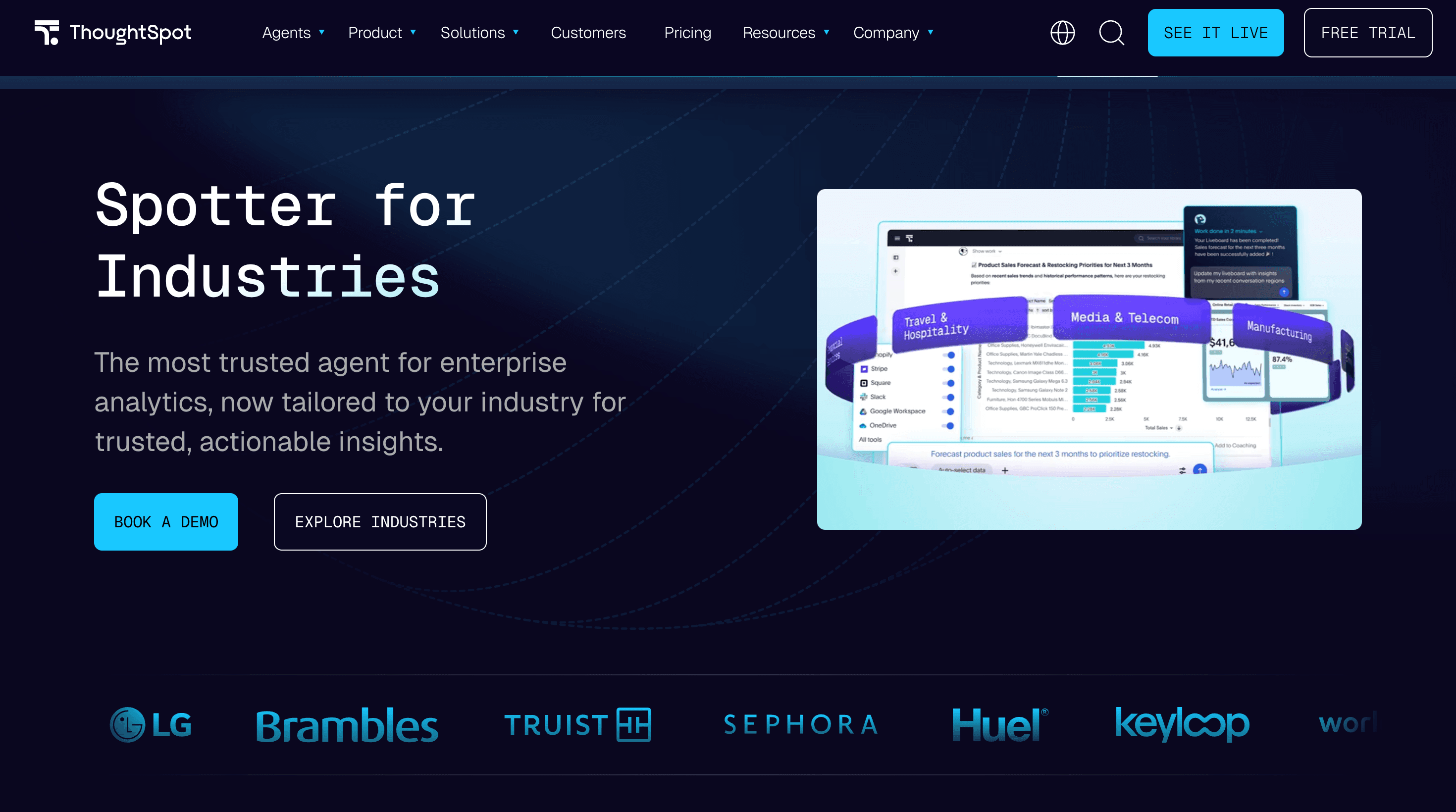

2. ThoughtSpot Spotter

Best for: Search-driven enterprise BI with governed semantic context

Spotter is an agent built on ThoughtSpot’s semantic layer, aiming to make natural language questions resolve to governed metrics and dimensions rather than raw schema guesses.

Dimensions:

| Dimension | How ThoughtSpot Spotter performs |
|---|---|
| Quality | Good for search-driven exploration and governed metrics when the semantic model is well-maintained; tends to be less strong on truly multi-step, agent-driven investigation beyond the ThoughtSpot interface. |
| Reliability | Can be more deterministic than pure NL2SQL, but reliability is bounded by semantic coverage and ambiguity resolution; edge questions often fall back to best-effort interpretation rather than producing an explicit “not answerable”. |
| Trust | Enterprise BI posture with governance patterns centered on metric definitions and controlled semantic mappings. |
| Scale | Can scale to many users, but practical scale is gated by the ongoing cost of semantic modeling + metric governance and by how well semantic changes are managed over time. |
| Cost | Often expensive at high usage; lower tiers can have query caps, and enterprise tiers typically remove caps at premium pricing. |
| Coverage | High coverage for modeled domains; weak on long-tail, cross-domain questions that weren’t explicitly modeled in the semantic layer. |

3. Databricks Genie (and Genie Code)

Best for: Data engineering teams that run entirely on the Databricks lakehouse stack

Databricks approaches agentic analytics from an engineering-first angle: Genie for business Q&A on governed data; Genie Code for engineers building and maintaining data logic.

Dimensions:

| Dimension | How Databricks Genie performs |
|---|---|
| Quality | Good for engineering-adjacent analytics, but less purpose-built for business-user conversational analytics. Does not support multi-agent analysis and actions. |
| Reliability | Reliability varies widely: with broad permissions, agents can generate fragile logic or take unintended actions; with tight constraints, capability can feel limited for non-technical users. |
| Trust | Leverages Unity Catalog governance boundaries; trust is strong when access controls and audit policies are correctly set. |
| Scale | Does not build a unified context and has no tools to update it continuously; coverage depends on questions that were explicitly tried or modeled. |
| Cost | Cost can climb quickly with heavy autonomous execution because spend is tied to compute + query workloads rather than per-interaction caps. |
| Coverage | Broad coverage inside the lakehouse; less compelling outside Databricks, and not always the simplest path for teams that primarily need governed business metrics + answers rather than engineering workflows. |

4. Microsoft Fabric + Copilot

Best for: Microsoft-first enterprises requiring deep M365 integration

Fabric’s differentiator is its integration across the Microsoft stack, including Power BI, Excel, Teams, and the broader Copilot ecosystem, under shared governance patterns.

Dimensions:

| Dimension | How Microsoft Fabric performs |
|---|---|
| Quality | Strong for Microsoft-centric orgs, but only offers shallow analytics. Cannot support deep dives and multi-agent execution. |
| Reliability | Reliability depends on Power BI model quality and careful configuration across services; cross-product handoffs can introduce silent failures (wrong context, wrong permissions, or inconsistent metric definitions). |
| Trust | Strong security primitives, but trust can break if permissions and metric definitions aren’t consistent across Fabric/Power BI/other M365 services; misconfiguration risk is real in multi-agent orchestration. |
| Scale | Very strong distribution and adoption potential in Microsoft-standardized orgs; works well for broad business-user rollout. |
| Cost | Pricing can be attractive if you already own Microsoft licenses, but total cost becomes opaque across SKUs/capacities and can surprise teams as usage scales. |
| Coverage | Excellent within Microsoft stack; limited portability outside of it. |

5. Sigma Computing

Best for: Warehouse-native teams who need governed, transparent exploration

Sigma’s strength is warehouse-native execution: analytics run directly on cloud data warehouses such as Snowflake and Databricks, without extracting or copying data.

Dimensions:

| Dimension | How Sigma performs |
|---|---|
| Quality | Strong for analyst-led exploration and spreadsheet-like modeling; less oriented toward autonomous, end-to-end agent workflows for non-analyst business users. |
| Reliability | Execution is reliable because it runs on the warehouse, but “agentic” reliability depends on human review and model hygiene; without that, NL requests can still map to the wrong metric interpretation. |
| Trust | Trust inherits from the warehouse governance perimeter; strong when access controls are correctly managed on the underlying platform. |
| Scale | Scales well for warehouse-native organizations; adoption depends on analyst enablement and governance setup. |
| Cost | Tooling cost can be reasonable, but warehouse compute often dominates at high concurrency; costs rise fast as more users run heavy ad-hoc analysis. |
| Coverage | Great for governed exploration; less oriented toward fully autonomous multi-step agentic workflows without additional tooling. |

6. Domo

Best for: Mid-market teams needing integrated pipelines and dashboards

Domo positions itself as an all-in-one platform (connectors + transformation + BI in one product), which can simplify deployment for teams that don’t want to assemble a modern stack.

Dimensions:

| Dimension | How Domo performs |
|---|---|
| Quality | Broad end-to-end coverage (connectors + ETL + dashboards), but depth in each layer can be less than best-of-breed; agentic capabilities are typically add-ons rather than core execution loops. |
| Reliability | Reliability is sensitive to data volume and ETL complexity; large-scale performance and operational overhead are common friction points. |
| Trust | Supports governance patterns typical of BI platforms; depth varies by deployment and how rigorously controls are configured. |
| Scale | Can scale for many BI use cases, but performance and cost can become constraints at very large scale. |
| Cost | Consumption-based pricing can be unpredictable and can spike with heavy pipeline + dashboard workloads. |
| Coverage | Strong breadth; “agentic” depth is less mature compared to platforms built specifically for autonomous analysis loops. |

7. Snowflake Cortex

Best for: Snowflake-first enterprises that want governed agentic analytics natively inside Snowflake

Snowflake Cortex extends Snowflake into agentic analytics through Cortex Analyst, Cortex Search, and Cortex Agents. Its core strength is that AI reasoning happens inside the Snowflake platform, close to governed enterprise data, rather than through an external analytics layer.

Dimensions:

| Dimension | How Snowflake Cortex performs |
|---|---|
| Quality | Strong for governed natural-language analytics on Snowflake data; depends on semantic layer quality. |
| Reliability | More reliable than raw NL2SQL, but still bounded by context coverage and permissions setup. |
| Trust | Strong Snowflake-native governance and security posture. |
| Scale | Scales well for Snowflake-centric enterprises; less ideal across fragmented stacks. |
| Cost | Efficient for Snowflake-first teams, though consumption can rise with heavy usage. |
| Coverage | Broad inside Snowflake; weaker outside the ecosystem. |

How to Choose

Most platforms overlap in functionality. The real differences appear in how each system addresses core enterprise requirements such as semantic consistency, governance, and autonomous multi-step analysis.

Below is a decision matrix using the same dimensions as the per-tool tables above (✓ = strong, ◐ = partial or setup-dependent, ✗ = weak).

| Platform | Quality | Reliability | Trust | Scale | Cost | Coverage |
|---|---|---|---|---|---|---|
| Genloop | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |
| ThoughtSpot Spotter | ◐ | ✓ | ✓ | ✓ | ◐ | ◐ |
| Databricks Genie | ◐ | ◐ | ✓ | ✓ | ◐ | ◐ |
| Microsoft Fabric | ◐ | ◐ | ✓ | ✓ | ◐ | ◐ |
| Sigma Computing | ◐ | ◐ | ✓ | ◐ | ◐ | ◐ |
| Domo | ◐ | ◐ | ◐ | ◐ | ✗ | ◐ |
| Snowflake Cortex | ◐ | ✓ | ✓ | ✓ | ◐ | ◐ |
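To apply the matrix to your own priorities, you can map each symbol to a number and weight the dimensions that matter most to your team. In this Python sketch the mapping (✓ = 1.0, ◐ = 0.5, ✗ = 0.0) and the example weights, which favor reliability and cost, are assumptions to adjust, not part of the evaluation itself.

```python
# Symbol-to-score mapping (an assumption; adjust to taste).
SCORES = {"✓": 1.0, "◐": 0.5, "✗": 0.0}

DIMENSIONS = ["Quality", "Reliability", "Trust", "Scale", "Cost", "Coverage"]

# Ratings copied from the decision matrix, one symbol per dimension, in order.
MATRIX = {
    "Genloop":             "✓✓✓✓✓✓",
    "ThoughtSpot Spotter": "◐✓✓✓◐◐",
    "Databricks Genie":    "◐◐✓✓◐◐",
    "Microsoft Fabric":    "◐◐✓✓◐◐",
    "Sigma Computing":     "◐◐✓◐◐◐",
    "Domo":                "◐◐◐◐✗◐",
    "Snowflake Cortex":    "◐✓✓✓◐◐",
}


def weighted_score(ratings: str, weights: dict) -> float:
    """Weighted average of one platform's ratings, normalized to the range 0..1."""
    total = sum(weights.values())
    return sum(SCORES[r] * weights[d] for r, d in zip(ratings, DIMENSIONS)) / total


# Hypothetical weights for a team that cares most about reliability and cost.
weights = {"Quality": 1, "Reliability": 2, "Trust": 1, "Scale": 1, "Cost": 2, "Coverage": 1}

ranked = sorted(MATRIX.items(), key=lambda kv: weighted_score(kv[1], weights), reverse=True)
for name, ratings in ranked:
    print(f"{name:<20} {weighted_score(ratings, weights):.2f}")
```

A symbol grid hides the nuance that the per-tool tables capture, so treat the resulting scores as a way to build a shortlist rather than as the final decision.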

FAQs

What is agentic data analysis?

Agentic data analysis is a way of working with data in which a user asks a business question in natural language and the system does more than return a chart or generate SQL. It plans the analysis, runs the necessary queries, evaluates intermediate results, follows relevant lines of investigation, and iterates toward an answer with minimal back-and-forth. Instead of behaving like a dashboard or a one-shot chatbot, it behaves like an analytical collaborator operating within governed enterprise data, doing more of the analytical work so business users can reach reliable answers conversationally.
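The plan, query, evaluate, iterate loop can be sketched in a few lines of Python. In this hedged example the "planner" is a hard-coded rule standing in for an LLM, and the `sales` table, its columns, and the data are hypothetical. What it illustrates is the control flow: an intermediate result decides whether the investigation continues and what to query next, without a new prompt from the user.

```python
import sqlite3

# Hypothetical sales data in an in-memory SQLite database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (quarter TEXT, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("Q1", "North", 120.0), ("Q1", "South", 100.0),
        ("Q2", "North", 118.0), ("Q2", "South", 60.0),
    ],
)


def run(sql: str, params: tuple = ()) -> list:
    """Execute one step of the plan and return its rows."""
    return conn.execute(sql, params).fetchall()


def investigate_revenue_change(prev: str, curr: str) -> str:
    """Toy agent loop: query, evaluate the result, then decide the next step."""
    # Step 1: total revenue for the two quarters.
    totals = dict(run(
        "SELECT quarter, SUM(amount) FROM sales "
        "WHERE quarter IN (?, ?) GROUP BY quarter", (prev, curr)))
    change = totals[curr] - totals[prev]
    if change >= 0:
        return f"Revenue did not fall ({prev} -> {curr}: {change:+.1f})."

    # Step 2: the drop triggers a follow-up breakdown by region.
    rows = run(
        "SELECT region, "
        "SUM(CASE WHEN quarter = ? THEN amount ELSE 0 END) - "
        "SUM(CASE WHEN quarter = ? THEN amount ELSE 0 END) AS delta "
        "FROM sales GROUP BY region ORDER BY delta ASC", (curr, prev))
    region, delta = rows[0]  # region with the largest decline
    return (f"Revenue fell {-change:.1f} from {prev} to {curr}; "
            f"{region} accounts for {-delta:.1f} of it.")


print(investigate_revenue_change("Q1", "Q2"))
# Revenue fell 42.0 from Q1 to Q2; South accounts for 40.0 of it.
```

A production agent would generate each step from the question and a context graph rather than from fixed rules, but the loop shape (run, inspect, branch) stays the same.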

What is the difference between agentic BI and traditional BI?

Traditional BI waits for a question and returns a result. Agentic BI plans the analysis, runs it across data sources, and surfaces conclusions autonomously. The difference is not a better interface. It is a fundamentally different execution model.

Why do most enterprise AI analytics pilots fail?

Under the hood, most of these systems rely on text-to-SQL (NL2SQL) generation to translate natural language questions into database queries. Most pilots fail because they hit the context gap: NL2SQL without a semantic layer infers meaning from the raw schema. In a production environment with hundreds of tables and competing metric definitions, that inference breaks down quickly, and the agent returns confident answers to the wrong question.
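A small, hypothetical example shows how the context gap produces a confident wrong answer. Suppose an `orders` table stores refunded orders alongside completed ones, and the business defines revenue as completed orders only. A schema-only approach sees a numeric `amount` column and sums it; a governed definition applies the business rule. The table, data, and both queries are illustrative assumptions, not any platform's actual output.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL, status TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, 100.0, "completed"), (2, 250.0, "refunded"), (3, 150.0, "completed")],
)

# Schema-only inference: "revenue" is guessed to mean the only money column.
naive_sql = "SELECT SUM(amount) FROM orders"

# Governed definition from a semantic layer: revenue excludes refunds.
governed_sql = "SELECT SUM(amount) FROM orders WHERE status = 'completed'"

naive = conn.execute(naive_sql).fetchone()[0]
governed = conn.execute(governed_sql).fetchone()[0]
print(f"schema-only answer: {naive}")      # schema-only answer: 500.0
print(f"governed answer:    {governed}")   # governed answer:    250.0
```

Both queries are valid SQL and both run without error; only the business definition says which one answers the question that was actually asked.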

What should enterprises look for in an agentic analytics platform?

Six dimensions actually matter in production: quality, reliability, trust, scale, cost, and coverage. Systems that deliver on all six need a governed context layer, an AI-native architecture with BI discipline, grounded reasoning, deterministic execution, and continuous learning that evolves with the enterprise. Everything else is demo-stage capability.

Is conversational analytics the same as agentic BI?

No. Conversational analytics lets users query data in natural language. Agentic BI goes further: the system plans multi-step analysis, investigates follow-up questions autonomously, and acts on what it finds. Most conversational analytics tools are not genuinely agentic.